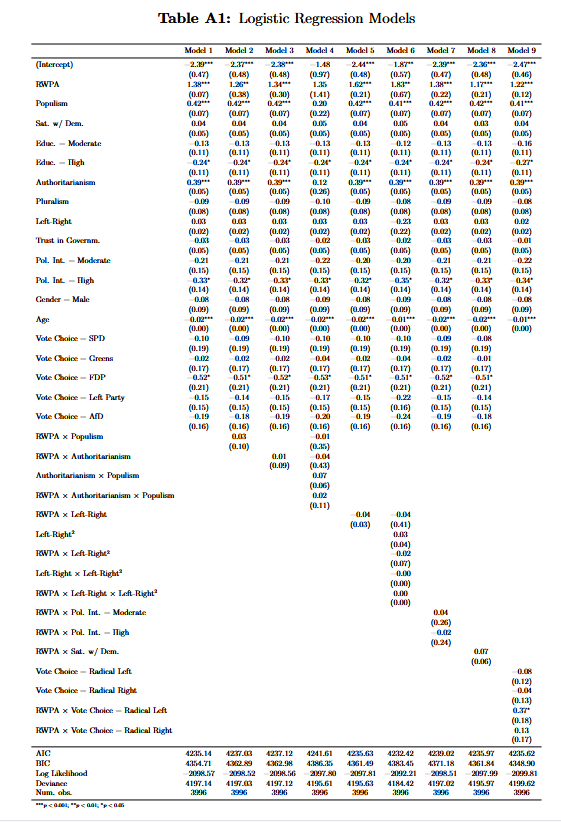

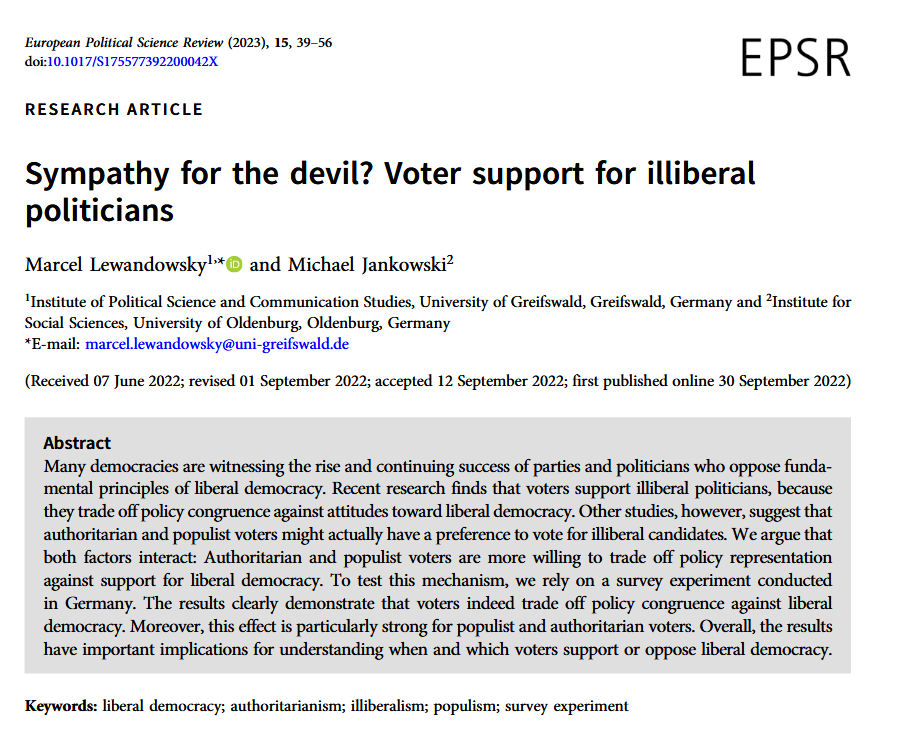

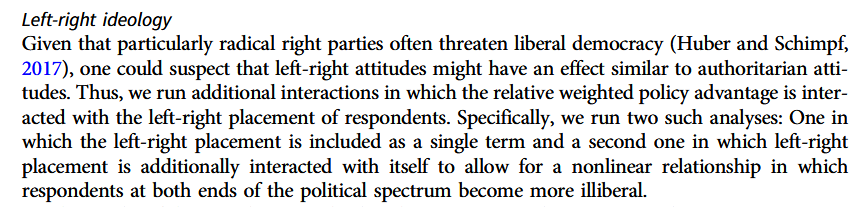

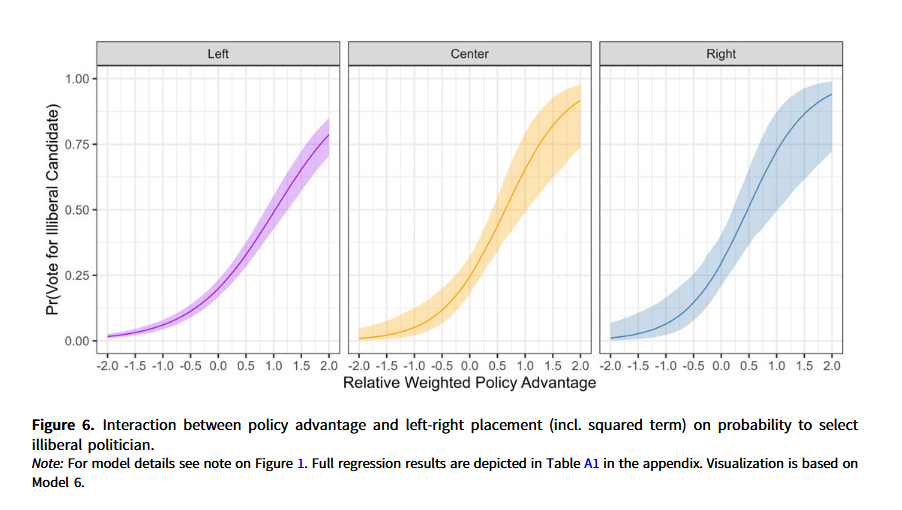

class: center, middle, inverse, title-slide

.title[
# 15: Transformations.
]
.subtitle[
## Linear Models
]
.author[
### <large>Jaye Seawright</large>
]
.institute[
### <small>Northwestern Political Science</small>
]
.date[
### Feb. 25, 2026
]

---
class: center, middle

<style type="text/css">
pre {
  max-height: 400px;
  overflow-y: auto;
}

pre[class] {
  max-height: 200px;
}
</style>

Measurement choices can affect regression results in many ways; we will focus on three:

1. Scaling and interpretation
2. Nonlinear transformations
3. Stabilizing variance

---
###Example

The relationship between GDP and democracy isn't linear. log(GDP) often works better.

---
###Linear Transformations: Scaling and Standardizing

The coefficient `\((\beta_j)\)` represents: *"Average change in Y per 1-unit change in X"*

But... 1 unit on what scale? Inches vs. centimeters vs. miles?

---

``` r
library(modelsummary)
library(kableExtra)   # kable_styling() and the %>% pipe

# Simulated height-earnings example (from ROS Chapter 12)
set.seed(123)
n <- 1000
height_in <- rnorm(n, mean = 66.6, sd = 3.8)  # height in inches
height_cm <- height_in * 2.54                 # height in centimeters

# True relationship: earnings increase about 6% per SD of height
earnings <- exp(9.55 + 0.06*scale(height_in)[,1] + rnorm(n, 0, 0.87))

# Compare coefficients
m_inch <- lm(earnings ~ height_in)
m_cm <- lm(earnings ~ height_cm)

heightms <- modelsummary(list("Inches" = m_inch, "Centimeters" = m_cm),
                         stars = TRUE, escape = TRUE, gof_omit = ".*",
                         output = "kableExtra") %>%
  kable_styling(font_size = 14)
```

---

<table style="NAborder-bottom: 0; width: auto !important; margin-left: auto; margin-right: auto; font-size: 14px; margin-left: auto; margin-right: auto;" class="table table"> <thead> <tr> <th style="text-align:left;"> </th> <th style="text-align:center;"> &nbsp;Inches </th> <th style="text-align:center;"> Centimeters </th> </tr> </thead> <tbody> <tr> <td style="text-align:left;"> (Intercept) </td> <td style="text-align:center;"> −27981.301* </td> <td style="text-align:center;"> −27981.301* </td> </tr> <tr> <td 
style="text-align:left;"> </td> <td style="text-align:center;"> (13201.360) </td> <td style="text-align:center;"> (13201.360) </td> </tr> <tr> <td style="text-align:left;"> height_in </td> <td style="text-align:center;"> 742.870*** </td> <td style="text-align:center;"> </td> </tr> <tr> <td style="text-align:left;"> </td> <td style="text-align:center;"> (197.721) </td> <td style="text-align:center;"> </td> </tr> <tr> <td style="text-align:left;"> height_cm </td> <td style="text-align:center;"> </td> <td style="text-align:center;"> 292.469*** </td> </tr> <tr> <td style="text-align:left;"> </td> <td style="text-align:center;"> </td> <td style="text-align:center;"> (77.843) </td> </tr> </tbody> <tfoot><tr><td style="padding: 0; " colspan="100%"> <sup></sup> + p < 0.1, * p < 0.05, ** p < 0.01, *** p < 0.001</td></tr></tfoot> </table>

---
###Solution:

The two slopes differ only by the unit conversion (742.870 / 2.54 ≈ 292.469); the model is the same.

Standardize to make coefficients comparable:

* z-scores: `\((z = (x - \bar{x})/\mathrm{sd}(x))\)`
* Gelman's preference: Divide by 2 SDs (makes coefficients comparable to those of binary variables)

---

``` r
library(rosdata)
library(modelsummary)
library(kableExtra)   # kable_styling() and the %>% pipe
data(kidiq) # from ROS package

# Problematic: the mom_hs main effect is evaluated at mom_iq = 0
m_raw <- lm(kid_score ~ mom_hs + mom_iq + mom_hs:mom_iq, data = kidiq)

# Better: Center continuous variable
kidiq$c_mom_iq <- kidiq$mom_iq - mean(kidiq$mom_iq)
m_centered <- lm(kid_score ~ mom_hs + c_mom_iq + mom_hs:c_mom_iq, data = kidiq)

kidiqms <- modelsummary(
  list("Raw (hard to interpret)" = m_raw,
       "Centered (interpretable)" = m_centered),
  stars = TRUE,
  output = "kableExtra"
) %>%
  kable_styling(font_size = 12)
```

---

<table style="NAborder-bottom: 0; width: auto !important; margin-left: auto; margin-right: auto; font-size: 12px; margin-left: auto; margin-right: auto;" class="table table"> <thead> <tr> <th style="text-align:left;"> </th> <th style="text-align:center;"> Raw (hard to interpret) </th> <th style="text-align:center;"> Centered (interpretable) </th> </tr> </thead> <tbody> <tr> <td style="text-align:left;"> (Intercept) </td> <td 
style="text-align:center;"> −11.482 </td> <td style="text-align:center;"> 85.407*** </td> </tr> <tr> <td style="text-align:left;"> </td> <td style="text-align:center;"> (13.758) </td> <td style="text-align:center;"> (2.218) </td> </tr> <tr> <td style="text-align:left;"> mom_hs </td> <td style="text-align:center;"> 51.268*** </td> <td style="text-align:center;"> 2.841 </td> </tr> <tr> <td style="text-align:left;"> </td> <td style="text-align:center;"> (15.338) </td> <td style="text-align:center;"> (2.427) </td> </tr> <tr> <td style="text-align:left;"> mom_iq </td> <td style="text-align:center;"> 0.969*** </td> <td style="text-align:center;"> </td> </tr> <tr> <td style="text-align:left;"> </td> <td style="text-align:center;"> (0.148) </td> <td style="text-align:center;"> </td> </tr> <tr> <td style="text-align:left;"> mom_hs × mom_iq </td> <td style="text-align:center;"> −0.484** </td> <td style="text-align:center;"> </td> </tr> <tr> <td style="text-align:left;"> </td> <td style="text-align:center;"> (0.162) </td> <td style="text-align:center;"> </td> </tr> <tr> <td style="text-align:left;"> c_mom_iq </td> <td style="text-align:center;"> </td> <td style="text-align:center;"> 0.969*** </td> </tr> <tr> <td style="text-align:left;"> </td> <td style="text-align:center;"> </td> <td style="text-align:center;"> (0.148) </td> </tr> <tr> <td style="text-align:left;"> mom_hs × c_mom_iq </td> <td style="text-align:center;"> </td> <td style="text-align:center;"> −0.484** </td> </tr> <tr> <td style="text-align:left;box-shadow: 0px 1.5px"> </td> <td style="text-align:center;box-shadow: 0px 1.5px"> </td> <td style="text-align:center;box-shadow: 0px 1.5px"> (0.162) </td> </tr> <tr> <td style="text-align:left;"> Num.Obs. 
</td> <td style="text-align:center;"> 434 </td> <td style="text-align:center;"> 434 </td> </tr> <tr> <td style="text-align:left;"> R2 </td> <td style="text-align:center;"> 0.230 </td> <td style="text-align:center;"> 0.230 </td> </tr> <tr> <td style="text-align:left;"> R2 Adj. </td> <td style="text-align:center;"> 0.225 </td> <td style="text-align:center;"> 0.225 </td> </tr> <tr> <td style="text-align:left;"> AIC </td> <td style="text-align:center;"> 3745.1 </td> <td style="text-align:center;"> 3745.1 </td> </tr> <tr> <td style="text-align:left;"> BIC </td> <td style="text-align:center;"> 3765.5 </td> <td style="text-align:center;"> 3765.5 </td> </tr> <tr> <td style="text-align:left;"> Log.Lik. </td> <td style="text-align:center;"> −1867.543 </td> <td style="text-align:center;"> −1867.543 </td> </tr> <tr> <td style="text-align:left;"> F </td> <td style="text-align:center;"> 42.839 </td> <td style="text-align:center;"> 42.839 </td> </tr> <tr> <td style="text-align:left;"> RMSE </td> <td style="text-align:center;"> 17.89 </td> <td style="text-align:center;"> 17.89 </td> </tr> </tbody> <tfoot><tr><td style="padding: 0; " colspan="100%"> <sup></sup> + p < 0.1, * p < 0.05, ** p < 0.01, *** p < 0.001</td></tr></tfoot> </table>

---

Interpretation before centering:

mom_hs coefficient (51.268): Effect of mom_hs when mom_iq = 0 (nonsensical!)

After centering:

mom_hs coefficient (2.841): Effect of mom_hs at average mom_iq (meaningful!)

The mom_iq slope, the interaction, and every fit statistic are identical in the two columns; centering only moves the point at which the mom_hs effect is evaluated.
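---

Why centering moves the main effect but leaves the interaction and the fit alone can be checked directly. The following is a minimal sketch on simulated data, not the kidiq data: the variable names `hs` and `iq` and all coefficient values are assumptions chosen only for illustration.

```r
# Simulated stand-in for the kidiq example; every number here is made up.
set.seed(42)
n  <- 500
hs <- rbinom(n, 1, 0.8)                # hypothetical binary predictor
iq <- rnorm(n, mean = 100, sd = 15)    # hypothetical continuous predictor
y  <- 20 + 40 * hs + 0.8 * iq - 0.4 * hs * iq + rnorm(n, 0, 10)

c_iq <- iq - mean(iq)                  # centered copy of iq

m_raw      <- lm(y ~ hs + iq + hs:iq)
m_centered <- lm(y ~ hs + c_iq + hs:c_iq)

# The interaction coefficient and the fitted values do not change.
same_interaction <- all.equal(unname(coef(m_raw)["hs:iq"]),
                              unname(coef(m_centered)["hs:c_iq"]))
same_fit <- all.equal(fitted(m_raw), fitted(m_centered))

# The centered hs coefficient is the raw one re-evaluated at mean(iq):
# b_hs + b_interaction * mean(iq)
b <- coef(m_raw)
hs_at_mean <- unname(b["hs"] + b["hs:iq"] * mean(iq))
same_main <- all.equal(hs_at_mean, unname(coef(m_centered)["hs"]))

print(c(interaction = isTRUE(same_interaction),
        fit         = isTRUE(same_fit),
        main_effect = isTRUE(same_main)))
```

Centering is a reparameterization: the two fits describe the same regression surface, and only the reference point for the `hs` coefficient differs.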
---
###The Logarithm: Workhorse Transformation

When to Use: Positive variables with right skew (GDP, income, population, campaign spending)

---

Three Common Forms:

`\(\log(Y) \sim X\)` (log-linear): "1-unit increase in X → constant % change in Y"

`\(Y \sim \log(X)\)` (linear-log): "1% increase in X → constant additive change in Y"

`\(\log(Y) \sim \log(X)\)` (log-log): Constant elasticity (economics favorite)

---

<img src="Transformations_files/figure-html/unnamed-chunk-6-1.png" width="80%" style="display: block; margin: auto;" />

---
###Interpreting Logged Coefficients

Rule of thumb for small coefficients `\((|\beta| < 0.1)\)`: `\(\exp(\beta) \approx 1 + \beta\)`

---

For any coefficient:

``` r
# Example from ROS: earnings ~ height (log scale)
beta <- 0.06
percent_change <- (exp(beta) - 1) * 100
cat("A coefficient of", beta, "on the log scale corresponds to a",
    round(percent_change, 1), "% increase on the original scale.\n")
```

```
## A coefficient of 0.06 on the log scale corresponds to a 6.2 % increase on the original scale.
```

``` r
# For larger coefficients
beta_large <- 0.37
percent_large <- (exp(beta_large) - 1) * 100
cat("\nA coefficient of", beta_large, "corresponds to a",
    round(percent_large, 1), "% increase (not 37%!).")
```

```
## 
## A coefficient of 0.37 corresponds to a 44.8 % increase (not 37%!).
```

---
###Always remember:

Predictions on log scale: `\((\widehat{\log(y)} = X \beta)\)`

Back to original scale: `\((\hat{y} = \exp(X \beta))\)`

---

``` r
# Prepare data
# qogts is assumed to be a data frame already in the workspace (its loading is not shown)
library(tidyverse)   # loads dplyr, tidyr, and ggplot2

democracy_data <- qogts %>%
  filter(year == 2020) %>%
  select(country = cname,
         libdem = vdem_libdem,
         gdp_pc = wdi_gdpcappppcon2017) %>%
  drop_na() %>%
  filter(gdp_pc > 0, libdem > 0)

# Fit three different models
m_linear <- lm(libdem ~ gdp_pc, data = democracy_data)
m_logx <- lm(libdem ~ log(gdp_pc), data = democracy_data)
m_loglog <- lm(log(libdem) ~ log(gdp_pc), data = democracy_data)

# Compare
dem.ms <- modelsummary(
  list("Linear" = m_linear, "Log-X" = m_logx, "Log-Log" = m_loglog),
  stars = TRUE,
  gof_map = c("nobs", "r.squared", "rmse"),
  output = "kableExtra"
) %>%
  kable_styling(font_size = 14)
```

---

<table style="NAborder-bottom: 0; width: auto !important; margin-left: auto; margin-right: auto; font-size: 14px; margin-left: auto; margin-right: auto;" class="table table"> <thead> <tr> <th style="text-align:left;"> </th> <th style="text-align:center;"> Linear </th> <th style="text-align:center;"> &nbsp;Log-X </th> <th style="text-align:center;"> Log-Log </th> </tr> </thead> <tbody> <tr> <td style="text-align:left;"> (Intercept) </td> <td style="text-align:center;"> 0.300*** </td> <td style="text-align:center;"> −0.627*** </td> <td style="text-align:center;"> −3.548*** </td> </tr> <tr> <td style="text-align:left;"> </td> <td style="text-align:center;"> (0.024) </td> <td style="text-align:center;"> (0.136) </td> <td style="text-align:center;"> (0.462) </td> </tr> <tr> <td style="text-align:left;"> gdp_pc </td> <td style="text-align:center;"> 0.000*** </td> <td style="text-align:center;"> </td> <td style="text-align:center;"> </td> </tr> <tr> <td style="text-align:left;"> </td> <td style="text-align:center;"> (0.000) </td> <td style="text-align:center;"> </td> <td style="text-align:center;"> </td> </tr> <tr> <td style="text-align:left;"> log(gdp_pc) </td> <td 
style="text-align:center;"> </td> <td style="text-align:center;"> 0.112*** </td> <td style="text-align:center;"> 0.261*** </td> </tr> <tr> <td style="text-align:left;box-shadow: 0px 1.5px"> </td> <td style="text-align:center;box-shadow: 0px 1.5px"> </td> <td style="text-align:center;box-shadow: 0px 1.5px"> (0.014) </td> <td style="text-align:center;box-shadow: 0px 1.5px"> (0.049) </td> </tr> <tr> <td style="text-align:left;"> Num.Obs. </td> <td style="text-align:center;"> 164 </td> <td style="text-align:center;"> 164 </td> <td style="text-align:center;"> 164 </td> </tr> <tr> <td style="text-align:left;"> R2 </td> <td style="text-align:center;"> 0.245 </td> <td style="text-align:center;"> 0.271 </td> <td style="text-align:center;"> 0.148 </td> </tr> <tr> <td style="text-align:left;"> RMSE </td> <td style="text-align:center;"> 0.22 </td> <td style="text-align:center;"> 0.22 </td> <td style="text-align:center;"> 0.73 </td> </tr> </tbody> <tfoot><tr><td style="padding: 0; " colspan="100%"> <sup></sup> + p < 0.1, * p < 0.05, ** p < 0.01, *** p < 0.001</td></tr></tfoot> </table> --- Which is best? Let's check residuals... 
``` r
# Residual plots
p1 <- ggplot(democracy_data, aes(x = gdp_pc, y = resid(m_linear))) +
  geom_point(alpha = 0.5) +
  geom_smooth(se = FALSE, method = "loess", color = "red") +
  labs(title = "Linear Model Residuals",
       x = "GDP per capita", y = "Residuals") +
  theme_minimal()

p2 <- ggplot(democracy_data, aes(x = log(gdp_pc), y = resid(m_logx))) +
  geom_point(alpha = 0.5) +
  geom_smooth(se = FALSE, method = "loess", color = "red") +
  labs(title = "Log-X Model Residuals",
       x = "log(GDP per capita)", y = "Residuals") +
  theme_minimal()

p3 <- ggplot(democracy_data, aes(x = log(gdp_pc), y = resid(m_loglog))) +
  geom_point(alpha = 0.5) +
  geom_smooth(se = FALSE, method = "loess", color = "red") +
  labs(title = "Log-Log Model Residuals",
       x = "log(GDP per capita)", y = "Residuals") +
  theme_minimal()
```

---

``` r
library(patchwork)   # supplies | and / for arranging ggplots
(p1 | p2) / p3
```

<img src="Transformations_files/figure-html/unnamed-chunk-11-1.png" width="70%" style="display: block; margin: auto;" />

---

<table class="table table-striped table-hover table-condensed" style="font-size: 12px; width: auto !important; margin-left: auto; margin-right: auto;"> <caption style="font-size: initial !important;">Common Statistical Transformations</caption> <thead> <tr> <th style="text-align:left;"> Transformation </th> <th style="text-align:left;"> When to Use </th> <th style="text-align:left;"> R Code </th> <th style="text-align:left;"> Interpretation </th> </tr> </thead> <tbody> <tr> <td style="text-align:left;width: 5em; font-weight: bold;"> Square root </td> <td style="text-align:left;"> Count data, moderate right skew </td> <td style="text-align:left;"> `sqrt(y) ~ x` </td> <td style="text-align:left;"> Compresses high values less severely than log </td> </tr> <tr> <td style="text-align:left;width: 5em; font-weight: bold;"> Inverse </td> <td style="text-align:left;"> Extreme right skew, rates </td> <td style="text-align:left;"> `1/y ~ x` </td> <td style="text-align:left;"> Reciprocal relationship </td> </tr> <tr> <td 
style="text-align:left;width: 5em; font-weight: bold;"> Polynomial </td> <td style="text-align:left;"> U-shaped relationships </td> <td style="text-align:left;"> `y ~ x + I(x^2)` </td> <td style="text-align:left;"> Curvilinear effects (but beware of overfitting!) </td> </tr> <tr> <td style="text-align:left;width: 5em; font-weight: bold;"> Logit </td> <td style="text-align:left;"> Proportions (0-1) </td> <td style="text-align:left;"> `qlogis(p) ~ x` </td> <td style="text-align:left;"> Log-odds scale for probabilities </td> </tr> </tbody> </table>

---

``` r
# Visual comparison
x <- seq(1, 100, length.out = 100)
df <- data.frame(
  x = x,
  linear = 10 + 0.5*x,
  sqrt = (10 + 0.5*x)^2,  # Inverse of sqrt transform
  log = exp(2 + 0.03*x)
)

transformationcomp <- ggplot(df %>% tidyr::pivot_longer(-x),
                             aes(x = x, y = value, color = name)) +
  geom_line(linewidth = 1) +
  scale_color_brewer(palette = "Set1") +
  labs(title = "Different Transformations Compress High Values Differently",
       x = "X", y = "Y (original scale)",
       color = "Transformation") +
  theme_minimal() +
  theme(legend.position = "bottom")
```

---

<img src="Transformations_files/figure-html/unnamed-chunk-13-1.png" width="70%" style="display: block; margin: auto;" />

---
###Transformations vs. Generalized Linear Models (GLMs)

Transformations: Modify data to fit linear model

``` r
lm(log(y) ~ x)  # Log transformation
```

---
###Transformations vs. Generalized Linear Models (GLMs)

GLMs: Modify model to fit data

``` r
glm(y ~ x, family = poisson)            # Count data
glm(y ~ x, family = binomial)           # Binary data
glm(y ~ x, family = Gamma(link="log"))  # Positive continuous
```

---

Trade-offs:

1. Transformations: Simpler, easier to explain, work for many continuous outcomes
2. GLMs: More theoretically appropriate for binary/count outcomes

* Gelman & Hill's advice: Start with transformations for continuous outcomes, move to GLMs if needed.

---
#Building Models with Transformations: A Workflow

``` r
# `data` is a placeholder data frame with columns gdp and democracy

# 1. VISUALIZE the raw relationship
ggplot(data, aes(x = gdp, y = democracy)) +
  geom_point() +
  geom_smooth(method = "loess")

# 2. TRY different transformations
m1 <- lm(democracy ~ gdp, data = data)
m2 <- lm(democracy ~ log(gdp), data = data)
m3 <- lm(log(democracy) ~ gdp, data = data)
m4 <- lm(log(democracy) ~ log(gdp), data = data)

# 3. CHECK residuals (should be patternless)
plot(resid(m2) ~ predict(m2))  # Ideally: random scatter

# 4. INTERPRET coefficients
# For m2: effect of 1% GDP increase is about beta/100 units of democracy
# For m4: elasticity = beta% democracy change per 1% GDP change

# 5. VALIDATE predictions on original scale
data$pred_m2 <- predict(m2)
data$pred_m4 <- exp(predict(m4))  # Remember to exponentiate!

# 6. COMPARE models
# RMSE and R2 are only comparable between models with the same outcome scale
# (m1 vs. m2, and m3 vs. m4)
library(performance)
compare_performance(m1, m2, m3, m4, metrics = c("RMSE", "R2"))
```

Advanced: Interactions with Transformed Variables

Golden Rule: Center continuous variables before creating interactions!

---
###Zeros and Negatives

Some transformations fail at certain ranges.

`\(\log(0) = -\infty\)`, `\(\log(\text{negative}) = \text{undefined}\)`

Solutions:

1. `\(\log(x + 1)\)` (`log1p(x)` in R) to handle zeros
2. `asinh(x)` (inverse hyperbolic sine), a similar transformation that is also defined at zero and for negative numbers

---
###When NOT to Transform

1. When the original scale is substantively meaningful
2. When the transformation obscures more than it reveals
3. When minor improvements in fit aren't worth the interpretive complexity
4. When alternative approaches (GLMs, nonparametric models) are better

---
###Practical R Tools

``` r
# Diagnostic plotting
library(ggplot2)
ggplot(data, aes(x = gdp, y = democracy)) +
  geom_point() +
  geom_smooth(method = "lm") +
  scale_x_log10() +  # Quick visual check
  scale_y_log10()

# Model comparison table (m1, m2, m4 as defined in the workflow)
library(modelsummary)
models <- list("Linear" = m1, "Log-X" = m2, "Log-Log" = m4)
modelsummary(models, stars = TRUE, output = "kableExtra")

# Marginal effects after transformation
library(margins)
margins(m2, at = list(gdp = c(1000, 10000, 100000)))

# Automatic transformation suggestion
library(MASS)  # note: masks dplyr::select() when loaded after dplyr
boxcox(m1)  # Suggests optimal power transformation

# Easy standardization
data$z_gdp <- scale(data$gdp)[,1]  # z-scores
data$gdp_2sd <- data$z_gdp / 2     # Gelman's divide by 2 SDs
```
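---

The two standardizations can be compared on a toy regression. This is a sketch under stated assumptions: the data are simulated, and the names `x`, `y`, `z_x`, and `x_2sd` are placeholders rather than variables from the examples above.

```r
# Simulated data; the predictor scale and the slope are made up.
set.seed(1)
n <- 400
x <- rnorm(n, mean = 50, sd = 12)      # hypothetical continuous predictor
y <- 3 + 0.25 * x + rnorm(n, 0, 5)

z_x   <- scale(x)[, 1]                 # z-score: (x - mean(x)) / sd(x)
x_2sd <- z_x / 2                       # centered, divided by 2 SDs

b_raw <- unname(coef(lm(y ~ x))[2])
b_z   <- unname(coef(lm(y ~ z_x))[2])
b_2sd <- unname(coef(lm(y ~ x_2sd))[2])

# Rescaling the predictor rescales the slope by the same factor.
z_ok   <- all.equal(b_z,   b_raw * sd(x))
twosd_ok <- all.equal(b_2sd, b_raw * 2 * sd(x))

print(c(raw = b_raw, z = b_z, two_sd = b_2sd))
print(c(z_ok = isTRUE(z_ok), twosd_ok = isTRUE(twosd_ok)))
```

The 2-SD version reads as the change in `y` for a move from one SD below the mean to one SD above it. A binary variable split 50/50 has an SD of 0.5, so its 0-to-1 change is also a 2-SD change, which is why the two kinds of coefficient end up on a comparable footing.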