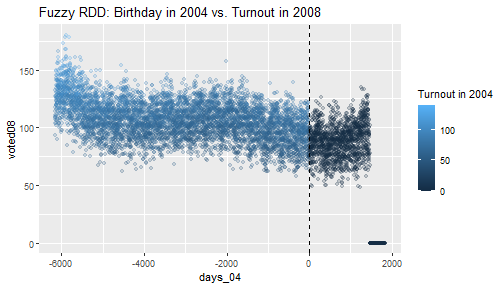

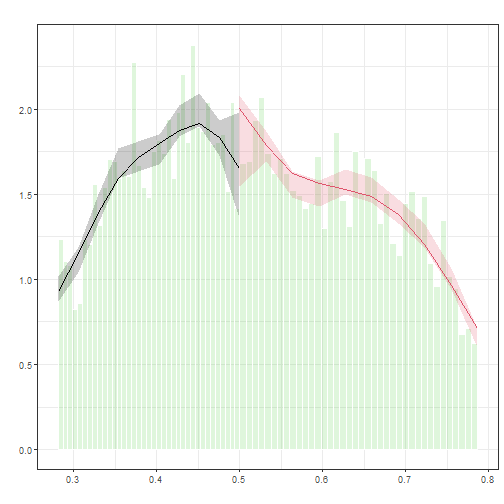

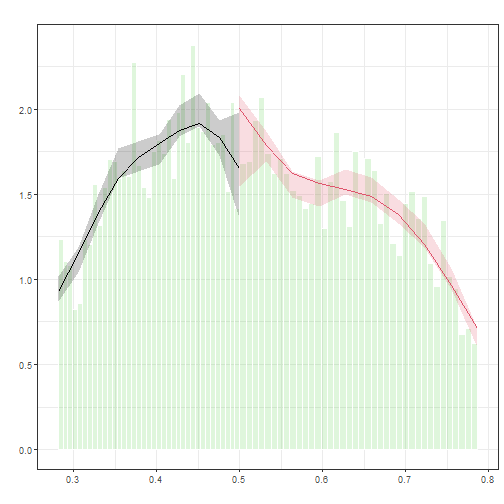

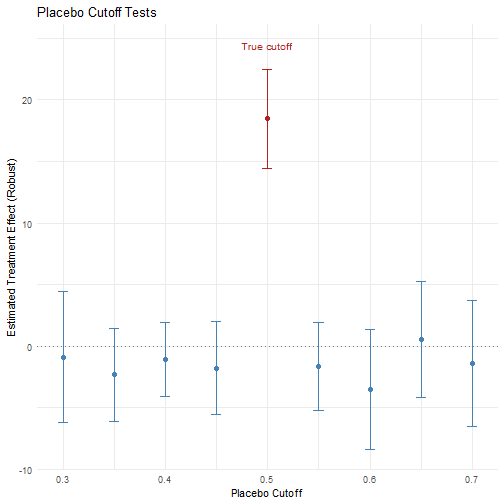

class: center, middle, inverse, title-slide .title[ # 5: Regression Discontinuity Designs ] .subtitle[ ## Quantitative Causal Inference ] .author[ ### <large>Jaye Seawright</large> ] .institute[ ### <small>Northwestern Political Science</small> ] .date[ ### April 30 and May 5, 2026 ] --- class: center, middle <style type="text/css"> pre { max-height: 400px; overflow-y: auto; } pre[class] { max-height: 200px; } </style> ###Today's Plan 1. Why RDD? 2. RDD assumptions 3. Bandwidth selection 4. Sharp vs. fuzzy RDD 5. Visualization 6. Estimation strategies 7. Diagnostics 8. Reporting --- ### Why RDD? Connecting to What We Know - In week 3 we saw that RDD exploits a threshold rule to create a local natural experiment. Today we get into the details of how that actually works in practice. - Like IV, RDD sidesteps selection on unobservables, but instead of an instrument that shifts treatment probability, the threshold deterministically (or near-deterministically) assigns treatment. --- ### Why RDD? (cont.) - The key tradeoff relative to IV: RDD doesn't require finding an external instrument, but the estimates are local to units near the cutoff. Whether that local effect is the one you care about is a substantive question worth asking about any application. (This is similar to whether the estimates local to the effects of an instrument are worthwhile.) - Unlike DiD (next week's topic), there's no parallel trends assumption — but there is the continuity assumption, which we'll examine carefully today. --- ### The Key Assumption Recall from week 3: *Continuity Assumption*: The conditional expectations of potential outcomes `\(\text{E}[Y_C|X]\)` and `\(\text{E}[Y_T|X]\)` are continuous in the assignment variable `\(X\)` in the neighborhood of the cutoff `\(c\)`. * Under this assumption, an observed discontinuity at `\(c\)` is going to be a plausible estimator of the local treatment effect. --- <img src="images/Lee1.png" width="90%" style="display: block; margin: auto;" /> --- <img src="images/Lee2.png" width="90%" style="display: block; margin: auto;" /> --- ``` r #This coding example due to Yuta Toyama. demmeans <- split(lmb_data$democrat, cut(lmb_data$lagdemvoteshare, 100)) %>% lapply(mean) %>% unlist() agg_lmb_data <- data.frame(democrat = demmeans, lagdemvoteshare = seq(0.01,1, by = 0.01)) ``` --- ``` r lmb_data <- lmb_data %>% mutate(gg_group = if_else(lagdemvoteshare > 0.5, 1,0)) gg_srd = ggplot(data=lmb_data, aes(lagdemvoteshare, democrat)) + geom_point(aes(x = lagdemvoteshare, y = democrat), data = agg_lmb_data) + xlim(0,1) + ylim(-0.1,1.1) + geom_vline(xintercept = 0.5) + xlab("Democrat Vote Share, time t") + ylab("Probability of Democrat Win, time t+1") + scale_y_continuous(breaks=seq(0,1,0.2)) + ggtitle(TeX("Effect of Initial Win on Winning Next Election: $\\P^D_{t+1} - P^R_{t+1}$")) ``` --- ``` r gg_srd + stat_smooth(aes(lagdemvoteshare, democrat, group = gg_group), method = "lm" , formula = y ~ x + I(x^2)) ``` <img src="5regressiondiscontinuitydesigns_files/figure-html/unnamed-chunk-7-1.png" width="70%" style="display: block; margin: auto;" /> --- ``` r gg_srd + stat_smooth(data=lmb_data %>% filter(lagdemvoteshare>.45 & lagdemvoteshare<.55), aes(lagdemvoteshare, democrat, group = gg_group), method = "lm", formula = y ~ x + I(x^2)) ``` <img src="5regressiondiscontinuitydesigns_files/figure-html/unnamed-chunk-8-1.png" width="70%" style="display: block; margin: auto;" /> --- <img src="5regressiondiscontinuitydesigns_files/figure-html/unnamed-chunk-9-1.png" width="50%" style="display: block; margin: auto;" /> --- ``` r lmb_subset <- lmb_data %>% filter(lagdemvoteshare>.48 & lagdemvoteshare<.52) lm_1 <- lm_robust(score ~ lagdemocrat, data = lmb_subset, se_type = "HC1") summary(lm_1) ``` ``` ## ## Call: ## lm_robust(formula = score ~ lagdemocrat, data = lmb_subset, se_type = "HC1") ## ## Standard error type: HC1 ## ## Coefficients: ## Estimate Std. Error t value Pr(>|t|) CI Lower CI Upper DF ## (Intercept) 31.20 1.334 23.39 3.788e-95 28.58 33.81 913 ## lagdemocrat 21.28 1.951 10.91 3.987e-26 17.45 25.11 913 ## ## Multiple R-squared: 0.1152 , Adjusted R-squared: 0.1142 ## F-statistic: 119 on 1 and 913 DF, p-value: < 2.2e-16 ``` --- ``` ## ## Call: ## lm_robust(formula = score ~ lagdemocrat, data = lmb_subset, se_type = "HC1") ## ## Standard error type: HC1 ## ## Coefficients: ## Estimate Std. Error t value Pr(>|t|) CI Lower CI Upper DF ## (Intercept) 31.20 1.334 23.39 3.788e-95 28.58 33.81 913 ## lagdemocrat 21.28 1.951 10.91 3.987e-26 17.45 25.11 913 ## ## Multiple R-squared: 0.1152 , Adjusted R-squared: 0.1142 ## F-statistic: 119 on 1 and 913 DF, p-value: < 2.2e-16 ``` --- ### A Second Example <img src="images/Dahlgaard1.png" width="90%" style="display: block; margin: auto;" /> --- <img src="images/Dahlgaard2.png" width="70%" style="display: block; margin: auto;" /> --- ### RDD Windows - How wide a window above and below the break point? --- ``` r lmb_subset <- lmb_data %>% filter(lagdemvoteshare>.49 & lagdemvoteshare<.51) lm_1 <- lm_robust(score ~ lagdemocrat, data = lmb_subset, se_type = "HC1") summary(lm_1) ``` ``` ## ## Call: ## lm_robust(formula = score ~ lagdemocrat, data = lmb_subset, se_type = "HC1") ## ## Standard error type: HC1 ## ## Coefficients: ## Estimate Std. Error t value Pr(>|t|) CI Lower CI Upper DF ## (Intercept) 31.71 1.938 16.358 4.539e-47 27.90 35.52 428 ## lagdemocrat 23.97 2.799 8.564 1.985e-16 18.47 29.47 428 ## ## Multiple R-squared: 0.1453 , Adjusted R-squared: 0.1433 ## F-statistic: 73.34 on 1 and 428 DF, p-value: < 2.2e-16 ``` --- ``` r lmb_subset <- lmb_data %>% filter(lagdemvoteshare>.495 & lagdemvoteshare<.505) lm_1 <- lm_robust(score ~ lagdemocrat, data = lmb_subset, se_type = "HC1") summary(lm_1) ``` ``` ## ## Call: ## lm_robust(formula = score ~ lagdemocrat, data = lmb_subset, se_type = "HC1") ## ## Standard error type: HC1 ## ## Coefficients: ## Estimate Std. Error t value Pr(>|t|) CI Lower CI Upper DF ## (Intercept) 29.55 2.547 11.600 3.574e-24 24.52 34.57 202 ## lagdemocrat 29.13 3.845 7.577 1.250e-12 21.55 36.72 202 ## ## Multiple R-squared: 0.2177 , Adjusted R-squared: 0.2138 ## F-statistic: 57.41 on 1 and 202 DF, p-value: 1.25e-12 ``` --- ### Bias-Variance Tradeoff - As the window grows, bias increases due to including observations farther from the threshold. - But also variance declines because we have more observations available. --- ### RDD > Irrespective of the manner in which the bandwidth is chosen, one > should always investigate the sensitivity of the inferences to this > choice, for example, by including results for bandwidths twice (or > four times) and half (or a quarter of) the size of the originally > chosen bandwidth. Obviously, such bandwidth choices affect both > estimates and standard errors, but if the results are critically > dependent on a particular bandwidth choice, they are clearly less > credible than if they are robust to such variation in bandwidths. > (Imbens and Lemieux 2008) --- ### RDD - Green, Leong, Kern, Gerber, and Larimer find that an estimate of the optimal bandwidth proposed by Imbens and Kalyanaraman, in conjunction with local linear regression, helps RDD come very close to replicating experimental results. --- ``` r library(rdrobust) rddik <- with(lmb_data, rdrobust(score, lagdemvoteshare, c=0.5)) summary(rddik) ``` ``` ## Sharp RD estimates using local polynomial regression. ## ## Number of Obs. 13577 ## BW type mserd ## Kernel Triangular ## VCE method NN ## ## Number of Obs. 5670 7907 ## Eff. Number of Obs. 2207 1940 ## Order est. (p) 1 1 ## Order bias (q) 2 2 ## BW est. (h) 0.086 0.086 ## BW bias (b) 0.133 0.133 ## rho (h/b) 0.647 0.647 ## Unique Obs. 2878 3279 ## ## ===================================================================== ## Point Robust Inference ## Estimate z P>|z| [ 95% C.I. ] ## --------------------------------------------------------------------- ## RD Effect 18.666 9.053 0.000 [14.455 , 22.443] ## ===================================================================== ``` --- ``` r rdbw2 <- rdbwselect(lmb_data$score, lmb_data$lagdemvoteshare, c=0.5) summary(rdbw2) ``` ``` ## Call: rdbwselect ## ## Number of Obs. 13577 ## BW type mserd ## Kernel Triangular ## VCE method NN ## ## Number of Obs. 5670 7907 ## Order est. (p) 1 1 ## Order bias (q) 2 2 ## Unique Obs. 2878 3279 ## ## ======================================================= ## BW est. (h) BW bias (b) ## Left of c Right of c Left of c Right of c ## ======================================================= ## mserd 0.086 0.086 0.133 0.133 ## ======================================================= ``` --- ``` r lmb_subset <- lmb_data %>% filter(lagdemvoteshare>.41 & lagdemvoteshare<.59) lm_final <- lm_robust(score ~ lagdemocrat, data = lmb_subset, se_type = "HC1") summary(lm_final) ``` ``` ## ## Call: ## lm_robust(formula = score ~ lagdemocrat, data = lmb_subset, se_type = "HC1") ## ## Standard error type: HC1 ## ## Coefficients: ## Estimate Std. Error t value Pr(>|t|) CI Lower CI Upper DF ## (Intercept) 28.35 0.5779 49.06 0.000e+00 27.21 29.48 4304 ## lagdemocrat 28.77 0.8610 33.41 7.265e-218 27.08 30.46 4304 ## ## Multiple R-squared: 0.2067 , Adjusted R-squared: 0.2066 ## F-statistic: 1117 on 1 and 4304 DF, p-value: < 2.2e-16 ``` --- ### Sharp vs. Fuzzy Regression Discontinuity - In a **sharp RDD**, treatment status is a deterministic function of the running variable: `$$D_i = \mathbf{1}(X_i \geq c)$$` --- ### Sharp vs. Fuzzy Regression Discontinuity - In a **fuzzy RDD**, the probability of treatment jumps at the cutoff, but not from 0 to 1: `$$\lim_{x \downarrow c} P(D=1|X=x) \neq \lim_{x \uparrow c} P(D=1|X=x)$$` but neither limit is necessarily 0 or 1. --- ### Sharp vs. Fuzzy Regression Discontinuity - Fuzzy designs arise naturally: - Imperfect compliance with treatment assignment. - Multiple treatments or program eligibility vs. take-up. - Measurement error in the running variable. --- ### Why Fuzzy RDD? - Example: A scholarship program awards aid to students with test scores above 70. *But* some students with scores below 70 appeal and receive aid; some with scores above 70 decline it. * Treatment (receiving aid) is not perfectly determined by the cutoff. --- ### Why Fuzzy RDD? - Example: Incumbency advantage (Lee 2008) is sharp if we define treatment as "winning the election." But if we define treatment as "serving as incumbent," and some winners resign or die, it becomes fuzzy. --- ### Why Fuzzy RDD? - Fuzzy RDD can still identify a causal effect, but for a specific subpopulation (compliers at the cutoff). --- <img src="images/Bertanha.png" width="70%" style="display: block; margin: auto;" /> --- ### Identification in Fuzzy RDD - The fuzzy RDD can be viewed as an **instrumental variables** setup: - **Instrument** `\(Z_i = \mathbf{1}(X_i \geq c)\)` (the cutoff indicator) - **Treatment** `\(D_i\)` (endogenous) - **Outcome** `\(Y_i\)` - **Running variable** `\(X_i\)` (controls, often including polynomials) --- ### Identification in Fuzzy RDD - Key assumptions (similar to IV): 1. **Relevance**: The cutoff strongly predicts treatment (first stage). 2. **Exclusion**: Crossing the cutoff affects `\(Y\)` only through `\(D\)` (no direct effect). 3. **Independence**: Potential outcomes and first stage are smooth at `\(c\)` (no manipulation). 4. **Monotonicity**: No defiers (compliance is one-sided or uniform). --- ### Identification in Fuzzy RDD - Under these, the fuzzy RD estimand identifies the **LATE for compliers at the cutoff**: `$$\tau_{fuzzy} = \frac{\lim_{x\downarrow c} E[Y|X=x] - \lim_{x\uparrow c} E[Y|X=x]}{\lim_{x\downarrow c} E[D|X=x] - \lim_{x\uparrow c} E[D|X=x]}$$` --- ### Estimation Approaches for Fuzzy RDD 1\. **Two-Stage Least Squares (2SLS)**: - First stage: `\(D_i = \gamma_0 + \gamma_1 Z_i + f(X_i) + \eta_i\)` - Second stage: `\(Y_i = \beta_0 + \beta_1 \hat{D}_i + g(X_i) + \epsilon_i\)` - `\(f(X_i)\)` and `\(g(X_i)\)` are flexible functions of `\(X\)` (e.g., polynomials, local linear). --- ### Estimation Approaches for Fuzzy RDD 2\. **Local Linear Wald Estimator** (preferred in modern RDD packages): - Estimate numerator and denominator using local linear regression on each side. - Bandwidth selection can be separate for outcome and treatment. --- ### Estimation Approaches for Fuzzy RDD 3\. **`rdrobust` with fuzzy option**: - The `rdrobust` package allows fuzzy estimation by supplying a treatment variable. --- ### Example: Coppock and Green, Voting Habits ``` r df_colorado <- read_csv("https://raw.githubusercontent.com/jnseawright/PS406/refs/heads/main/data/df_colorado.csv") # Visualize library(ggplot2) df_colorado %>% ggplot(aes(x = days_04, y = voted08, color = voted04)) + geom_point(alpha = 0.2) + geom_vline(xintercept = 0, linetype = "dashed") + labs(title = "Fuzzy RDD: Birthday in 2004 vs. Turnout in 2008", color = "Turnout in 2004") ``` <!-- --> --- ``` r with(df_colorado, rdrobust(y = voted08, x = days_04, c = 0, fuzzy = voted04)) %>% summary() ``` ``` ## Fuzzy RD estimates using local polynomial regression. ## ## Number of Obs. 7958 ## BW type mserd ## Kernel Triangular ## VCE method NN ## ## Number of Obs. 6145 1813 ## Eff. Number of Obs. 291 292 ## Order est. (p) 1 1 ## Order bias (q) 2 2 ## BW est. (h) 291.735 291.735 ## BW bias (b) 571.274 571.274 ## rho (h/b) 0.511 0.511 ## Unique Obs. 6145 1813 ## ## First-stage estimates. ## ## ===================================================================== ## Point Robust Inference ## Estimate z P>|z| [ 95% C.I. ] ## ===================================================================== ## Rd Effect -66.239 -31.734 0.000 [-69.728 , -61.615] ## ===================================================================== ## ## Treatment effect estimates. ## ## ===================================================================== ## Point Robust Inference ## Estimate z P>|z| [ 95% C.I. ] ## --------------------------------------------------------------------- ## RD Effect 0.185 5.800 0.000 [0.140 , 0.283] ## ===================================================================== ``` --- ### Reading the RDD Output: First Stage The first-stage RD effect is −66.239, which is obviously a large negative number. Why? The running variable days_04 measures days relative to the 18th birthday cutoff for the 2004 election, with larger values corresponding to younger individuals (born further after the cutoff date). Crossing from left to right therefore means going from just old enough to just too young to have voted in 2004. So the first stage is negative by construction: just to the right of the cutoff, 2004 turnout drops sharply — by about 66 percentage points among the effective observations near the threshold. --- ### Reading the RDD Output: Second Stage The treatment effect estimate of 0.185 is the fuzzy RD LATE: among compliers at the age-eligibility cutoff, voting in 2004 increases the probability of voting in 2008 by approximately 18.5 percentage points. This is the habit formation effect Coppock and Green are interested in. --- ### Visualizing RDD: Why Graphics Matter - Graphical presentation is **essential** in RDD: - Provides easy-to-communicate evidence of a discontinuity. - Reveals the functional form (linear, quadratic, etc.). - Helps detect anomalies (outliers, heaping, manipulation). --- ### Visualizing RDD: Choices - Key elements of an RDD plot: 1. **Binned averages** of the outcome (to reduce noise). 2. **Smoothed fits** (polynomial or local linear) on each side. 3. A vertical line at the cutoff. - Always show the raw data (or binned means) *and* the fitted lines. --- ### Constructing Bins: Why? - Plotting every observation often results in overplotting and obscures the pattern. - Solution: group the running variable into bins and plot the **mean outcome** within each bin. --- ### Constructing Bins: Choosing Size - How many bins? Too few → miss curvature; too many → noisy. - Modern approach: **data-driven bin selection** that mimics the variance of the data (e.g., mimicking variance criterion, IMSE-optimal binning). - The `rdrobust` package provides an excellent function: `rdplot()`. --- ``` r library(rdrobust) # Basic rdplot with default bin selection rdplot(y = lmb_data$score, x = lmb_data$lagdemvoteshare, c = 0.5, title = "RD Plot: Incumbency Advantage", x.label = "Lag Democrat Vote Share", y.label = "Next Election Score") ``` ``` ## [1] "Mass points detected in the running variable." ``` <img src="5regressiondiscontinuitydesigns_files/figure-html/rdplot_example-1.png" width="90%" /> --- ``` ## [1] "Mass points detected in the running variable." ``` <img src="5regressiondiscontinuitydesigns_files/figure-html/rdplot_example2-1.png" width="90%" /> --- ### "Mass Points Detected": What Does This Mean? The warning "Mass points detected in the running variable" means that many observations share identical values of the running variable. Here, this happens because vote shares are recorded as rounded percentages, producing heaping at values like 0.50, 0.51, 0.52, etc. - Generally, we assume the running variable is continuous and smooth, so this points toward a violation of the setup, although not all violations are created equal. --- **Practical guidance**: When you see this warning, ask whether the heaping is mechanical (rounding, as here) or behavioral (units sorting to specific values to cross the threshold). Mechanical heaping is generally benign for the continuity assumption. Behavioral heaping (e.g., students retaking a test until they score exactly 70) is a sign of manipulation and a genuine threat to identification. --- ### Constructing Bins: Automation * rdplot automatically chooses bin widths to balance precision and flexibility. * The plot shows binned means (dots) and a polynomial fit (lines) on each side. * Look for a clear jump at the cutoff (x = 0.5). --- ### Customizing Bins: Manual Control * Sometimes you may want to experiment with bin sizes to check sensitivity. --- ``` r # Create 50 equal-sized bins lmb_data <- lmb_data %>% mutate(bin = cut(lagdemvoteshare, breaks = 50, labels = FALSE)) bin_means <- lmb_data %>% group_by(bin) %>% summarise( x = mean(lagdemvoteshare, na.rm = TRUE), y = mean(score, na.rm = TRUE), .groups = "drop" ) quadfitplot <- ggplot(bin_means, aes(x = x, y = y)) + geom_point() + geom_vline(xintercept = 0.5, linetype = "dashed") + stat_smooth(data = subset(lmb_data, lagdemvoteshare < 0.5), aes(lagdemvoteshare, score), method = "lm", formula = y ~ poly(x, 2)) + stat_smooth(data = subset(lmb_data, lagdemvoteshare >= 0.5), aes(lagdemvoteshare, score), method = "lm", formula = y ~ poly(x, 2)) + labs(x = "Lag Democrat Vote Share", y = "Next Election Score", title = "Manual Bins with Quadratic Fits") ``` --- <img src="5regressiondiscontinuitydesigns_files/figure-html/quadfitplot-1.png" width="90%" /> --- ### Customizing Bins: Conclusions * Here we use 50 bins and overlay separate quadratic fits. * Notice how the bin means are less noisy than raw data. * The quadratic fits capture curvature but may be sensitive to outliers. --- ### What to Look For in an RDD Plot * Discontinuity at the cutoff: Is there a visible jump? Is it large relative to the noise? * Smoothness on each side: Do the binned means follow a smooth trend? If not, maybe the polynomial order is wrong. * Boundary behavior: Are there outliers or gaps right at the cutoff? (Could indicate manipulation.) --- ### What to Look For in an RDD Plot * Consistency across bin widths: Does the jump persist if you change bin size? * Comparison with global fit: Does a global polynomial (e.g., quadratic) match the local pattern? * Always include a plot in any RDD paper; it's often the most convincing evidence. --- ### Common Pitfalls in RD Graphics * Too few bins: Hides curvature, may create false discontinuity. * Too many bins: Noisy, hard to see pattern. * Ignoring binning: Raw data scatterplots are uninterpretable with large N. --- ### Common Pitfalls in RD Graphics * Overfitting: Using high-order polynomials that wiggle unrealistically. * Not showing confidence intervals: Binned means have sampling error; consider adding error bars (though often omitted for clarity). * The rdplot function can add confidence intervals with the ci option: ``` r rdplot(..., ci = 95) ``` --- ### Estimation Methods in RDD: Three Approaches 1. **Local Randomization View**: Treatment is "as good as randomly assigned" in a narrow window around the cutoff. *Simple difference in means*. 2. **Global Polynomials**: Fit a flexible function (e.g., quadratic, cubic) to all data, with a discontinuity indicator. *Flexible but sensitive*. 3. **Local Linear Regression**: Fit separate linear regressions on each side of the cutoff, weighting observations near the cutoff. *Preferred method (Imbens & Lemieux 2008); reduces boundary bias*. --- ### Estimation Methods in RDD: Three Approaches We'll illustrate each using the Lee (2008) incumbency data. --- ### 1. Local Randomization View - Idea: If we restrict to a very narrow bandwidth around the cutoff, units are nearly identical except for treatment status. - This mimics a randomized experiment: for units with `\(X \in [c-h, c+h]\)`, treatment is "as good as randomly assigned." - Estimator: simple difference in means (or regression with no polynomial). --- ### 1. Local Randomization View ``` r library(estimatr) # Very narrow window: ±0.02 around cutoff (48% to 52%) lmb_narrow <- lmb_data %>% filter(lagdemvoteshare >= 0.48 & lagdemvoteshare <= 0.52) # Simple difference in means lm_narrow <- lm_robust(score ~ I(lagdemocrat>0.5), data = lmb_narrow, se_type = "HC1") summary(lm_narrow) ``` ``` ## ## Call: ## lm_robust(formula = score ~ I(lagdemocrat > 0.5), data = lmb_narrow, ## se_type = "HC1") ## ## Standard error type: HC1 ## ## Coefficients: ## Estimate Std. Error t value Pr(>|t|) CI Lower ## (Intercept) 31.20 1.334 23.39 3.788e-95 28.58 ## I(lagdemocrat > 0.5)TRUE 21.28 1.951 10.91 3.987e-26 17.45 ## CI Upper DF ## (Intercept) 33.81 913 ## I(lagdemocrat > 0.5)TRUE 25.11 913 ## ## Multiple R-squared: 0.1152 , Adjusted R-squared: 0.1142 ## F-statistic: 119 on 1 and 913 DF, p-value: < 2.2e-16 ``` --- ### 1. Local Randomization View - Estimate: 21.284 with SE 1.951. - This is intuitive but: - Comparatively small sample (915 observations). - Sensitive to exact window choice. - May still have bias if the true function has slope within the window. --- ### 2. Global Polynomial Regression - Use all data, fit a polynomial in the running variable separately on each side of the cutoff: `$$\tiny Y_i = \alpha + \tau D_i + \beta_1 X_i + \beta_2 X_i^2 + \cdots + \gamma_1 D_i X_i + \gamma_2 D_i X_i^2 + \cdots + \epsilon_i$$` - `\(\tau\)` is the treatment effect (discontinuity). --- ### 2. Global Polynomial Regression - Advantages: uses all data, simple to implement. - Disadvantages: - **Sensitive to polynomial order** (linear, quadratic, cubic?). - Data far from cutoff can influence the estimate at the cutoff. - High-order polynomials can overfit and produce erratic estimates. --- ``` r # Compare different polynomial orders library(modelsummary) lmb_data$centeredlagdem <- lmb_data$lagdemvoteshare - 0.5 # Linear (order 1) rd_linear <- lm(score ~ lagdemocrat * centeredlagdem, data = lmb_data) # Quadratic (order 2) rd_quad <- lm(score ~ lagdemocrat * (centeredlagdem + I(centeredlagdem^2)), data = lmb_data) # Cubic (order 3) rd_cubic <- lm(score ~ lagdemocrat * (centeredlagdem + I(centeredlagdem^2) + I(centeredlagdem^3)), data = lmb_data) # Compare coefficients on lagdemocrat (the treatment effect) models <- list("Linear" = rd_linear, "Quadratic" = rd_quad, "Cubic" = rd_cubic) ``` --- ``` r modelsummary(models, coef_map = c("lagdemocrat" = "Treatment Effect"), gof_map = c("nobs", "r.squared"), output = "kableExtra") ``` <table class="table" style="width: auto !important; margin-left: auto; margin-right: auto;"> <thead> <tr> <th style="text-align:left;"> </th> <th style="text-align:center;"> Linear </th> <th style="text-align:center;"> Quadratic </th> <th style="text-align:center;"> Cubic </th> </tr> </thead> <tbody> <tr> <td style="text-align:left;"> Treatment Effect </td> <td style="text-align:center;"> 37.695 </td> <td style="text-align:center;"> 18.072 </td> <td style="text-align:center;"> 21.428 </td> </tr> <tr> <td style="text-align:left;box-shadow: 0px 1.5px"> </td> <td style="text-align:center;box-shadow: 0px 1.5px"> (0.803) </td> <td style="text-align:center;box-shadow: 0px 1.5px"> (1.133) </td> <td style="text-align:center;box-shadow: 0px 1.5px"> (1.538) </td> </tr> <tr> <td style="text-align:left;"> Num.Obs. </td> <td style="text-align:center;"> 13577 </td> <td style="text-align:center;"> 13577 </td> <td style="text-align:center;"> 13577 </td> </tr> <tr> <td style="text-align:left;"> R2 </td> <td style="text-align:center;"> 0.268 </td> <td style="text-align:center;"> 0.306 </td> <td style="text-align:center;"> 0.310 </td> </tr> </tbody> </table> --- ### 2. Global Polynomial Regression - The treatment effect estimate varies: 37.695 (linear), 18.072 (quadratic), 21.428 (cubic). - Which is correct? We don't know—this sensitivity is concerning. --- ### Why Global Polynomials Can Mislead <img src="5regressiondiscontinuitydesigns_files/figure-html/poly_plot-1.png" width="90%" /> --- ### Why Global Polynomials Can Mislead - Notice how the quadratic fit curves away from the data near the cutoff? That's because it's influenced by points far away. - The linear fit may be too rigid if the true relationship is curved. --- ### 3. Local Linear Regression (Preferred) - Idea: Use only data within a bandwidth `\(h\)` of the cutoff, but fit a **linear** regression on each side, weighting observations by their distance from the cutoff (kernel weighting). - This reduces **boundary bias**: near the cutoff, a local linear model approximates the true function better than a global polynomial. --- ### What Local Linear Regression Is - Estimator (for a given bandwidth `\(h\)`): 1. For observations with `\(X_i \in [c-h, c+h]\)`, fit: `$$Y_i = \alpha_l + \beta_l (X_i - c) + \epsilon_i \quad \text{for } X_i < c$$` `$$Y_i = \alpha_r + \beta_r (X_i - c) + \epsilon_i \quad \text{for } X_i \ge c$$` 2. The treatment effect is `\(\hat{\tau} = \hat{\alpha}_r - \hat{\alpha}_l\)`. - Modern packages (e.g., `rdrobust`) use optimal bandwidth selection and bias correction. --- ### Local Linear with `rdrobust` ``` r library(rdrobust) # Local linear regression with default optimal bandwidth rd_ll <- rdrobust(y = lmb_data$score, x = lmb_data$lagdemvoteshare, c = 0.5, kernel = "triangular") ``` --- ``` ## Sharp RD estimates using local polynomial regression. ## ## Number of Obs. 13577 ## BW type mserd ## Kernel Triangular ## VCE method NN ## ## Number of Obs. 5670 7907 ## Eff. Number of Obs. 2207 1940 ## Order est. (p) 1 1 ## Order bias (q) 2 2 ## BW est. (h) 0.086 0.086 ## BW bias (b) 0.133 0.133 ## rho (h/b) 0.647 0.647 ## Unique Obs. 2878 3279 ## ## ===================================================================== ## Point Robust Inference ## Estimate z P>|z| [ 95% C.I. ] ## --------------------------------------------------------------------- ## RD Effect 18.666 9.053 0.000 [14.455 , 22.443] ## ===================================================================== ``` --- - The output shows: - **Conventional** estimates (uncorrected for bias). - **Bias-corrected** estimates (adjusts for remaining bias within bandwidth). - **Robust** confidence intervals (valid even with bias correction). --- - Key numbers: - Optimal bandwidth (left/right): 0.086 / 0.086 - Treatment effect (robust): 18.449 [CI: 14.455, 22.443] --- ### Why Local Linear is Preferred: Boundary Bias <img src="5regressiondiscontinuitydesigns_files/figure-html/boundary_bias-1.png" width="80%" /> --- ### Why Local Linear is Preferred: Boundary Bias - At the boundary (cutoff), a global linear fit (red) can be badly biased if the true function (black) is curved. - Local linear (not shown) fits only near the cutoff and adapts to local curvature. --- ### Next Steps: Diagnostics - After estimating the discontinuity, we must validate the design: - **Covariate balance**: Are pre-treatment characteristics smooth at the cutoff? - **Manipulation testing**: Is there evidence of sorting around the cutoff? - **Placebo cutoffs**: Do we see effects at other points? - **Bandwidth sensitivity**: Do results hold for different bandwidths? --- ### 1. Covariate Balance - If the RDD is valid, covariates that were determined **before** the treatment should not jump at the cutoff. - Idea: Run the same RD analysis on each covariate as the outcome. --- - Covariates in the Lee data: - `lagdemocrat`: whether Democrat won previous election (the treatment itself—should jump! Not a balance test.) - `score`: outcome (should jump) - Need other covariates: We have lags of tons of other variables. We will use an arbitrary one ("collegeattend") for this example. --- ``` r #Test if this covariate jumps at the cutoff library(rdrobust) rd_cov <- rdrobust(y = lmb_data$collegeattend, x = lmb_data$lagdemvoteshare, c = 0.5) summary(rd_cov) ``` ``` ## Sharp RD estimates using local polynomial regression. ## ## Number of Obs. 13428 ## BW type mserd ## Kernel Triangular ## VCE method NN ## ## Number of Obs. 5587 7841 ## Eff. Number of Obs. 1658 1549 ## Order est. (p) 1 1 ## Order bias (q) 2 2 ## BW est. (h) 0.067 0.067 ## BW bias (b) 0.120 0.120 ## rho (h/b) 0.563 0.563 ## Unique Obs. 2836 3250 ## ## ===================================================================== ## Point Robust Inference ## Estimate z P>|z| [ 95% C.I. ] ## --------------------------------------------------------------------- ## RD Effect 0.127 1.889 0.059 [-0.006 , 0.308] ## ===================================================================== ``` --- - If the estimate is small and not significant (robust `\((p > 0.05)\)`), then covariate is smooth. - The results here are borderline, and probably should raise some concerns. - If there is demographic sorting in terms of which kinds of districts just barely go to each party, we may be better off with a different research design. --- - In practice, test all available pre-treatment covariates and report a table. Think about multiple comparisons (remember, e.g., Benjamini-Hochberg from your regression class!). - If any covariate shows a discontinuity, the design is suspect. --- ### 2. Manipulation Testing: McCrary Density Test - If units can precisely manipulate the running variable, we may see a pile‑up of observations just above (or below) the cutoff. - The **McCrary (2008) density test** checks for a discontinuity in the density of the running variable at the cutoff. - Implementation in R: `rddensity` package (Cattaneo, Jansson, Ma). --- ``` r library(rddensity) density_test <- rddensity(lmb_data$lagdemvoteshare, c = 0.5) summary(density_test) ``` ``` ## ## Manipulation testing using local polynomial density estimation. ## ## Number of obs = 13577 ## Model = unrestricted ## Kernel = triangular ## BW method = estimated ## VCE method = jackknife ## ## c = 0.5 Left of c Right of c ## Number of obs 5670 7907 ## Eff. Number of obs 1856 2161 ## Order est. (p) 2 2 ## Order bias (q) 3 3 ## BW est. (h) 0.073 0.096 ## ## Method T P > |T| ## Robust 0.6834 0.4944 ``` ``` ## ## P-values of binomial tests (H0: p=0.5). ## ## Window Length / 2 <c >=c P>|T| ## 0.001 21 23 0.8804 ## 0.002 55 49 0.6241 ## 0.004 72 80 0.5703 ## 0.005 89 100 0.4671 ## 0.006 112 124 0.4740 ## 0.007 141 156 0.4166 ## 0.008 166 195 0.1405 ## 0.009 192 223 0.1408 ## 0.011 228 231 0.9256 ## 0.012 276 255 0.3855 ``` ``` r # Plot the density with confidence intervals density_plot <- rdplotdensity(density_test, lmb_data$lagdemvoteshare) ``` <!-- --> --- ``` ## ## Manipulation testing using local polynomial density estimation. ## ## Number of obs = 13577 ## Model = unrestricted ## Kernel = triangular ## BW method = estimated ## VCE method = jackknife ## ## c = 0.5 Left of c Right of c ## Number of obs 5670 7907 ## Eff. Number of obs 1856 2161 ## Order est. (p) 2 2 ## Order bias (q) 3 3 ## BW est. (h) 0.073 0.096 ## ## Method T P > |T| ## Robust 0.6834 0.4944 ``` ``` ## ## P-values of binomial tests (H0: p=0.5). ## ## Window Length / 2 <c >=c P>|T| ## 0.001 21 23 0.8804 ## 0.002 55 49 0.6241 ## 0.004 72 80 0.5703 ## 0.005 89 100 0.4671 ## 0.006 112 124 0.4740 ## 0.007 141 156 0.4166 ## 0.008 166 195 0.1405 ## 0.009 192 223 0.1408 ## 0.011 228 231 0.9256 ## 0.012 276 255 0.3855 ``` --- ``` ## $Estl ## Call: lpdensity ## ## Sample size 5670 ## Polynomial order for point estimation (p=) 2 ## Order of derivative estimated (v=) 1 ## Polynomial order for confidence interval (q=) 3 ## Kernel function triangular ## Scaling factor 0.417648791985857 ## Bandwidth method user provided ## ## Use summary(...) to show estimates. ## ## $Estr ## Call: lpdensity ## ## Sample size 7907 ## Polynomial order for point estimation (p=) 2 ## Order of derivative estimated (v=) 1 ## Polynomial order for confidence interval (q=) 3 ## Kernel function triangular ## Scaling factor 0.582424867413082 ## Bandwidth method user provided ## ## Use summary(...) to show estimates. ## ## $Estplot ``` <!-- --> --- - The test statistic is 0.683 with p-value 0.4944. - A non-significant p-value (p > 0.05) suggests no evidence of manipulation. - **Interpretation**: If significant, units may be sorting around the cutoff, violating continuity. --- ### 3. Placebo Cutoffs - Test for discontinuities at points where **no treatment should occur**. - Choose fake cutoffs and run the same RD estimation. - Ideally, we should see **no significant effects** at these placebo cutoffs. --- ``` r # Run placebo tests at cutoffs spanning the range, excluding the true cutoff placebo_cutoffs <- seq(0.3, 0.7, by = 0.05) placebo_cutoffs <- placebo_cutoffs[placebo_cutoffs != 0.5] placebo_results <- data.frame( cutoff = placebo_cutoffs, est = NA, ci_low = NA, ci_high = NA, p = NA ) for (i in seq_along(placebo_cutoffs)) { rd_tmp <- rdrobust(lmb_data$score, lmb_data$lagdemvoteshare, c = placebo_cutoffs[i]) placebo_results[i, "est"] <- rd_tmp$coef["Robust",] placebo_results[i, "ci_low"] <- rd_tmp$ci[3, 1] placebo_results[i, "ci_high"] <- rd_tmp$ci[3, 2] placebo_results[i, "p"] <- rd_tmp$pv[3] } # Add the true cutoff estimate for visual reference true_est <- rd_ll$coef["Robust",] true_ci_low <- rd_ll$ci[3, 1] true_ci_high <- rd_ll$ci[3, 2] placeboplot <- ggplot(placebo_results, aes(x = cutoff, y = est)) + geom_hline(yintercept = 0, linetype = "dotted", color = "grey50") + geom_errorbar(aes(ymin = ci_low, ymax = ci_high), width = 0.01, color = "steelblue") + geom_point(color = "steelblue", size = 2) + # True cutoff shown separately in red geom_errorbar(aes(x = 0.5, ymin = true_ci_low, ymax = true_ci_high), width = 0.01, color = "firebrick") + geom_point(aes(x = 0.5, y = true_est), color = "firebrick", size = 2) + annotate("text", x = 0.5, y = true_ci_high + 2, label = "True cutoff", color = "firebrick", size = 3.5) + labs(x = "Placebo Cutoff", y = "Estimated Treatment Effect (Robust)", title = "Placebo Cutoff Tests") + theme_minimal() ``` --- <!-- --> --- - If any placebo cutoff shows a significant effect, it suggests the outcome is discontinuous for reasons other than the treatment—perhaps due to model misspecification or other factors. - Also test cutoffs on either side, and consider using multiple placebos (e.g., every 0.05 increment) and plotting the results. --- ### 4. Bandwidth Sensitivity - The choice of bandwidth affects the estimate and its precision. - It is essential to show that results are not driven by a particular bandwidth. - Common approach: estimate the treatment effect for a range of bandwidths (e.g., from 0.5× to 2× the optimal bandwidth) and plot the estimates with confidence intervals. --- ``` r # Get optimal bandwidth from rdrobust bw_opt <- rd_ll$bws[1,1] # use left bandwidth as reference # Define bandwidth multipliers mult <- seq(0.5, 2, by = 0.1) results <- data.frame(mult = mult, est = NA, ci_low = NA, ci_high = NA) for (i in seq_along(mult)) { rd_tmp <- rdrobust(lmb_data$score, lmb_data$lagdemvoteshare, c = 0.5, h = bw_opt * mult[i]) results[i, "est"] <- rd_tmp$coef["Robust",] results[i, "ci_low"] <- rd_tmp$ci[3, 1] results[i, "ci_high"] <- rd_tmp$ci[3, 2] } # Plot bwsensplot <- ggplot(results, aes(x = mult, y = est)) + geom_hline(yintercept = 0, linetype = "dotted") + geom_ribbon(aes(ymin = ci_low, ymax = ci_high), alpha = 0.2) + geom_line() + geom_point() + labs(x = "Bandwidth Multiplier (× optimal)", y = "Treatment Effect (Robust)", title = "Bandwidth Sensitivity Analysis") ``` --- <img src="5regressiondiscontinuitydesigns_files/figure-html/bw_sensplot-1.png" width="90%" /> --- - The plot shows how the estimate changes with bandwidth. - Ideally, the estimate remains relatively stable across a reasonable range, and confidence intervals overlap. - If the estimate flips sign or changes dramatically, the results are fragile. --- ### Reporting RDD Results: Tables and Figures - A credible RDD paper presents results transparently, allowing readers to assess the design and robustness. - Key elements: 1. **Main RD plot** (binned means + fits) – already covered. 2. **Main results table** – estimates with different specifications. 3. **Robustness tables/figures** – bandwidth sensitivity, placebo cutoffs. 4. **Diagnostics table** – covariate balance, density test. --- ### 1. The Main RD Plot (Review) - Always start with a clear graphical display. ``` r library(rdrobust) library(ggplot2) # rdplot with default options rdplot(y = lmb_data$score, x = lmb_data$lagdemvoteshare, c = 0.5, title = "Incumbency Advantage in U.S. House Elections", x.label = "Democratic Vote Share at t", y.label = "Probability of Democratic Win at t+1") ``` ``` ## [1] "Mass points detected in the running variable." ``` <img src="5regressiondiscontinuitydesigns_files/figure-html/main_plot-1.png" width="90%" /> --- ``` ## [1] "Mass points detected in the running variable." ``` <img src="5regressiondiscontinuitydesigns_files/figure-html/main_plot2-1.png" width="90%" /> --- ### 2. Main Results Table: Multiple Specifications - Report estimates for: - Different bandwidths (e.g., optimal, half, double). - Different polynomial orders (local linear vs. local quadratic). - With and without covariates. - Use `rdrobust` to obtain estimates, then combine into a table. --- ``` r # Estimate for optimal bandwidth (local linear) rd_opt <- rdrobust(lmb_data$score, lmb_data$lagdemvoteshare, c = 0.5) # Half bandwidth rd_half <- rdrobust(lmb_data$score, lmb_data$lagdemvoteshare, c = 0.5, h = rd_opt$bws[1,1] / 2) # Double bandwidth rd_double <- rdrobust(lmb_data$score, lmb_data$lagdemvoteshare, c = 0.5, h = rd_opt$bws[1,1] * 2) # Local quadratic (order = 2) rd_quad <- rdrobust(lmb_data$score, lmb_data$lagdemvoteshare, c = 0.5, p = 2) # Extract key statistics into a data frame results_df <- data.frame( Specification = c("Optimal BW (linear)", "Half BW", "Double BW", "Optimal BW (quadratic)"), Estimate = round(c(rd_opt$coef["Robust",], rd_half$coef["Robust",], rd_double$coef["Robust",], rd_quad$coef["Robust",]), 3), SE = round(c(rd_opt$se[3], rd_half$se[3], rd_double$se[3], rd_quad$se[3]), 3), CI_low = round(c(rd_opt$ci[3,1], rd_half$ci[3,1], rd_double$ci[3,1], rd_quad$ci[3,1]), 3), CI_high = round(c(rd_opt$ci[3,2], rd_half$ci[3,2], rd_double$ci[3,2], rd_quad$ci[3,2]), 3) ) ``` --- ``` r knitr::kable(results_df, caption = "RDD Estimates of Incumbency Advantage") ``` Table: RDD Estimates of Incumbency Advantage |Specification | Estimate| SE| CI_low| CI_high| |:----------------------|--------:|-----:|------:|-------:| |Optimal BW (linear) | 18.449| 2.038| 14.455| 22.443| |Half BW | 27.463| 3.711| 20.189| 34.737| |Double BW | 17.688| 1.753| 14.252| 21.123| |Optimal BW (quadratic) | 24.545| 2.794| 19.070| 30.021| --- - Always report robust (bias-corrected) estimates and confidence intervals. - Include effective sample sizes on each side. --- ### 3. Include Diagnostic Results - The plots from the diagnostics section should be included in your paper or appendix. --- ### How Reliable Are RDD Applications? Stommes, Aronow, and Savje analyze the 45 most prominent RDDs published in political science. They find that there are often poor analytic methods and low power given sample sizes. --- <img src="images/Stommes1.png" width="70%" style="display: block; margin: auto;" /> --- <img src="images/Stommes2.png" width="70%" style="display: block; margin: auto;" /> --- <img src="images/Stommes3.png" width="70%" style="display: block; margin: auto;" /> --- ### Implications for Practice - If most RDD applications in political sciene have very low power, what does this mean for the credibility of effect estimates? - What does it mean that p values are clustered just above 0.05? - What do these findings imply for our potential use of RDD in our own research? --- ## RDD Best Practices - **Design**: RDD is most credible when the cutoff is sharp, non‑manipulable, and not chosen by the units themselves. - **Visualization first**: Always plot binned means + local linear fits. Let the graph tell the story before reaching for tables. - **Prefer local linear over global polynomials**: It reduces boundary bias and avoids overfitting far from the cutoff. - **Use robust inference**: Report bias‑corrected estimates with robust standard errors (e.g., `rdrobust`’s “Robust” output). --- - **Let the data choose the bandwidth** (IK or CCT), then test sensitivity: try half, double, and a range of multipliers. - **Diagnose rigorously**: - *Density test* (`rddensity`): check for manipulation / sorting. - *Covariate balance*: pre‑treatment characteristics should be smooth at the cutoff. - *Placebo cutoffs*: show that discontinuities appear only at the true threshold. - **Be transparent about power**: RDD often needs large samples near the cutoff; small effective N may yield noisy, fragile estimates. - **Report everything**—not just the preferred specification. A table with multiple bandwidths and polynomial orders builds credibility.