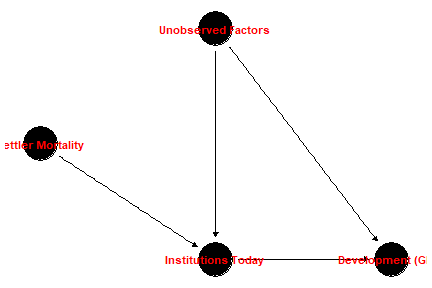

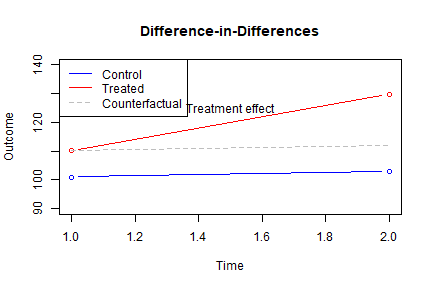

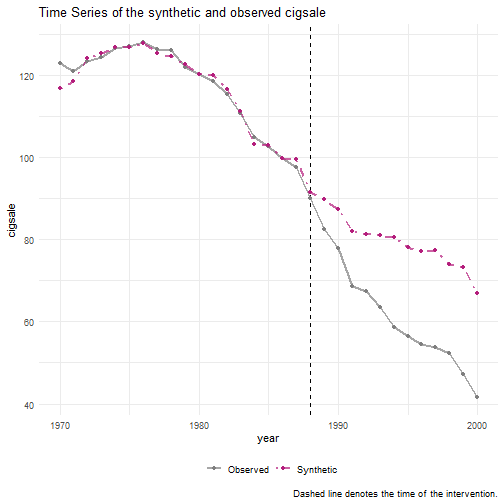

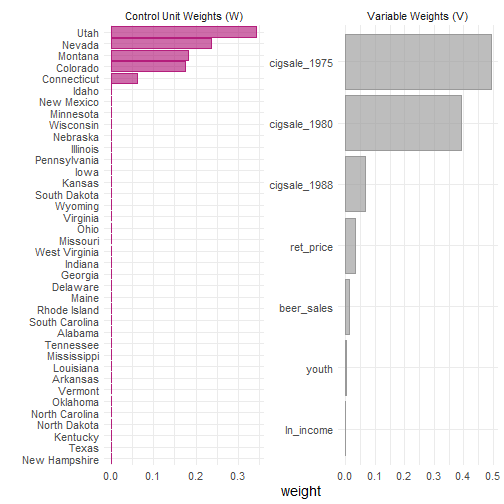

class: center, middle, inverse, title-slide .title[ # 3: Natural Experiments: Conceptual Introduction ] .subtitle[ ## Quantitative Causal Inference ] .author[ ### <large>Jaye Seawright</large> ] .institute[ ### <small>Northwestern Political Science</small> ] .date[ ### April 16 and 21, 2026 ] --- class: center, middle <style type="text/css"> pre { max-height: 400px; overflow-y: auto; } pre[class] { max-height: 200px; } </style> ### What Is a Natural Experiment? - A **natural experiment** is an observational study in which the treatment (or exposure) of interest is assigned by **factors outside the researcher’s control**, in a way that mimics random assignment. - The researcher does **not** manipulate the treatment – it occurs naturally, due to: - Policy changes, - Geographic or historical circumstances, - Random shocks (weather, lotteries, etc.), - Institutional rules (e.g., cutoffs). --- ### Natural Experiments vs. True Experiments | Feature | True Experiment | Natural Experiment | |--------|-----------------|---------------------| | Treatment assigned by | Researcher | Nature, policy, accident | | Randomization | Controlled by researcher | "As‑if random" – must be argued | | Setting | Often lab or field | Real‑world | --- ### Natural Experiments vs. True Experiments | Feature | True Experiment | Natural Experiment | |--------|-----------------|---------------------| | Internal validity | High (by design) | Requires strong assumptions | | External validity | May be limited | Often high (real‑world context) | --- - In a true experiment, randomization ensures that treatment and control groups are comparable **on average**. - In a natural experiment, we must **defend** the claim that treatment is as‑if randomly assigned. --- ### Key Features of a Natural Experiment 1. **Exogenous variation**: The source of variation in treatment is unrelated to potential outcomes (conditional on observables). 2. **No researcher manipulation**: The treatment arises from real‑world processes – policy discontinuities, weather, lotteries, historical events. 3. **Real‑world context**: Results often generalize better than lab experiments, but assumptions are harder to verify. --- ### Why Are Natural Experiments Valuable? - **Ethics**: We cannot randomly assign people to war, colonization, or unemployment. - **Feasibility**: Many interesting treatments (e.g., living in a democracy) are impossible to randomize. - **External validity**: Natural experiments study real populations in real settings. - They provide a bridge between observational studies and experiments, using **design** rather than statistical adjustment to identify causal effects. --- ### The Core Assumption: As‑If Random The central claim of any natural experiment is that treatment assignment is **"as‑if random"** – i.e., independent of potential outcomes, at least after conditioning on observable covariates. This assumption is **not testable directly**; we can only provide indirect evidence: - Balance tests (compare covariates across treatment and control). - Placebo tests (check for effects where none should exist). - Institutional knowledge (explain why assignment is haphazard). --- ### Five Types of Natural Experiments We will explore five common designs: 1. **Classic natural experiment**: A single treatment is as‑if randomly assigned (e.g., Snow’s cholera study, draft lottery). 2. **Instrumental‑variables natural experiment**: An as‑if random variable affects treatment, but not outcome directly (e.g., settler mortality as instrument for institutions). 3. **Regression‑discontinuity design**: Treatment is determined by a cutoff on a continuous variable (e.g., Maimonides' rule). --- ### Five Types of Natural Experiments 4\. **Difference-in-differences design**: treatment changes at unpredictable or meaningless times in some units but not others (e.g., minimum-wage study) 5\. **Synthetic-control design**: weight control cases to estimate the trajectory over time of the treatment case if it were in the control condition (e.g., Brexit study) --- ### Classic Natural Experiment 1. "Nature" randomizes the treatment. - No discretion is involved in assigning treatments, or the relevant information is unavailable or unused. 2. Randomized treatment has the same effect as non-randomized treatment would have. --- ### Snow: the Most Famous Natural Experiment <img src="images/jonsnow.png" width="100%" style="display: block; margin: auto;" /> --- ### Snow: the Most Famous Natural Experiment <img src="images/johnsnow.png" width="100%" style="display: block; margin: auto;" /> --- ### Snow: the Most Famous Natural Experiment <img src="images/snowpubandpump.png" width="50%" style="display: block; margin: auto;" /> --- ### Snow on Cholera <img src="images/snowcrossdistrict.jpg" width="40%" style="display: block; margin: auto;" /> --- ''Although the facts shown in the above table afford very strong evidence of the powerful influence which the drinking of water containing the sewage of a town exerts over the spread of cholera, when that disease is present, yet the question does not end here; for the intermixing of the water supply of the Southwark and Vauxhall Company with that of the Lambeth Company, over an extensive part of London, admitted of the subject being sifted in such a way as to yield the most incontrovertible proof on one side or the other.'' --- ''In the sub-districts enumerated in the above table as being supplied by both Companies, the mixing of the supply is of the most intimate kind. The pipes of each Company go down all the streets, and into nearly all the courts and alleys. A few houses are supplied by one Company and a few by the other, according to the decision of the owner or occupier at that time when the Water Companies were in active competition. In many cases a single house has a supply different from that on either side. Each company supplies both rich and poor, both large houses and small; there is no difference either in the condition or occupation of the persons receiving the water of the different Companies.'' --- <img src="images/snowwithindistrict.jpg" width="40%" style="display: block; margin: auto;" /> --- ``` r snowtable8 <- read_csv("https://github.com/jnseawright/PS406/raw/main/data/snowtable8.csv") snowlm <- lm(deathsOverall ~ supplier, data=snowtable8) ``` --- ``` r summary(snowlm) ``` ``` ## ## Call: ## lm(formula = deathsOverall ~ supplier, data = snowtable8) ## ## Residuals: ## Min 1Q Median 3Q Max ## -69.333 -16.896 -1.625 16.812 54.667 ## ## Coefficients: ## Estimate Std. Error t value Pr(>|t|) ## (Intercept) 4.50 14.93 0.302 0.765175 ## supplierSouthwarkVauxhall 65.83 17.23 3.820 0.000651 *** ## supplierSouthwarkVauxhall_Lambeth 36.25 16.69 2.172 0.038139 * ## --- ## Signif. codes: 0 '***' 0.001 '**' 0.01 '*' 0.05 '.' 0.1 ' ' 1 ## ## Residual standard error: 29.85 on 29 degrees of freedom ## Multiple R-squared: 0.3575, Adjusted R-squared: 0.3132 ## F-statistic: 8.07 on 2 and 29 DF, p-value: 0.001636 ``` --- ``` r snowlm2 <- lm(I(log(pop1851))~ supplier, data=snowtable8) summary(snowlm2) ``` ``` ## ## Call: ## lm(formula = I(log(pop1851)) ~ supplier, data = snowtable8) ## ## Residuals: ## Min 1Q Median 3Q Max ## -0.90380 -0.16029 -0.00353 0.28874 0.80617 ## ## Coefficients: ## Estimate Std. Error t value Pr(>|t|) ## (Intercept) 8.3014 0.1886 44.019 < 2e-16 *** ## supplierSouthwarkVauxhall 1.1731 0.2178 5.387 8.65e-06 *** ## supplierSouthwarkVauxhall_Lambeth 1.5134 0.2108 7.178 6.70e-08 *** ## --- ## Signif. codes: 0 '***' 0.001 '**' 0.01 '*' 0.05 '.' 0.1 ' ' 1 ## ## Residual standard error: 0.3772 on 29 degrees of freedom ## Multiple R-squared: 0.6402, Adjusted R-squared: 0.6153 ## F-statistic: 25.8 on 2 and 29 DF, p-value: 3.661e-07 ``` --- ### Vietnam War Draft Lottery --- <img src="images/draftrandom.JPG" width="100%" style="display: block; margin: auto;" /> --- ``` r draft1970 <- read_csv("https://github.com/jnseawright/PS406/raw/main/data/draft1970.csv") ``` --- ``` r boxplot(rank~month, data=draft1970) ``` <img src="3naturalexperiments_files/figure-html/unnamed-chunk-12-1.png" width="70%" style="display: block; margin: auto;" /> --- ``` r draftlm <- lm(rank ~ day, data=draft1970) ``` --- ``` r summ(draftlm) ``` <table class="table table-striped table-hover table-condensed table-responsive" style="width: auto !important; margin-left: auto; margin-right: auto;"> <tbody> <tr> <td style="text-align:left;font-weight: bold;"> Observations </td> <td style="text-align:right;"> 366 </td> </tr> <tr> <td style="text-align:left;font-weight: bold;"> Dependent variable </td> <td style="text-align:right;"> rank </td> </tr> <tr> <td style="text-align:left;font-weight: bold;"> Type </td> <td style="text-align:right;"> OLS linear regression </td> </tr> </tbody> </table> <table class="table table-striped table-hover table-condensed table-responsive" style="width: auto !important; margin-left: auto; margin-right: auto;"> <tbody> <tr> <td style="text-align:left;font-weight: bold;"> F(1,364) </td> <td style="text-align:right;"> 19.54 </td> </tr> <tr> <td style="text-align:left;font-weight: bold;"> R² </td> <td style="text-align:right;"> 0.05 </td> </tr> <tr> <td style="text-align:left;font-weight: bold;"> Adj. R² </td> <td style="text-align:right;"> 0.05 </td> </tr> </tbody> </table> <table class="table table-striped table-hover table-condensed table-responsive" style="width: auto !important; margin-left: auto; margin-right: auto;border-bottom: 0;"> <thead> <tr> <th style="text-align:left;"> </th> <th style="text-align:right;"> Est. </th> <th style="text-align:right;"> S.E. </th> <th style="text-align:right;"> t val. </th> <th style="text-align:right;"> p </th> </tr> </thead> <tbody> <tr> <td style="text-align:left;font-weight: bold;"> (Intercept) </td> <td style="text-align:right;"> 224.91 </td> <td style="text-align:right;"> 10.81 </td> <td style="text-align:right;"> 20.80 </td> <td style="text-align:right;"> 0.00 </td> </tr> <tr> <td style="text-align:left;font-weight: bold;"> day </td> <td style="text-align:right;"> -0.23 </td> <td style="text-align:right;"> 0.05 </td> <td style="text-align:right;"> -4.42 </td> <td style="text-align:right;"> 0.00 </td> </tr> </tbody> <tfoot><tr><td style="padding: 0; " colspan="100%"> <sup></sup> Standard errors: OLS</td></tr></tfoot> </table> --- What does this tell us about assumptions of randomization? --- ``` r draft1971 <- read_csv("https://github.com/jnseawright/PS406/raw/main/data/draft1971.csv") ``` --- ``` r boxplot(rank~month, data=draft1971) ``` <img src="3naturalexperiments_files/figure-html/unnamed-chunk-16-1.png" width="70%" style="display: block; margin: auto;" /> --- ``` r draft71lm <- lm(rank ~ day, data=draft1971) ``` --- ``` r summ(draft71lm) ``` <table class="table table-striped table-hover table-condensed table-responsive" style="width: auto !important; margin-left: auto; margin-right: auto;"> <tbody> <tr> <td style="text-align:left;font-weight: bold;"> Observations </td> <td style="text-align:right;"> 365 </td> </tr> <tr> <td style="text-align:left;font-weight: bold;"> Dependent variable </td> <td style="text-align:right;"> rank </td> </tr> <tr> <td style="text-align:left;font-weight: bold;"> Type </td> <td style="text-align:right;"> OLS linear regression </td> </tr> </tbody> </table> <table class="table table-striped table-hover table-condensed table-responsive" style="width: auto !important; margin-left: auto; margin-right: auto;"> <tbody> <tr> <td style="text-align:left;font-weight: bold;"> F(1,363) </td> <td style="text-align:right;"> 0.07 </td> </tr> <tr> <td style="text-align:left;font-weight: bold;"> R² </td> <td style="text-align:right;"> 0.00 </td> </tr> <tr> <td style="text-align:left;font-weight: bold;"> Adj. R² </td> <td style="text-align:right;"> -0.00 </td> </tr> </tbody> </table> <table class="table table-striped table-hover table-condensed table-responsive" style="width: auto !important; margin-left: auto; margin-right: auto;border-bottom: 0;"> <thead> <tr> <th style="text-align:left;"> </th> <th style="text-align:right;"> Est. </th> <th style="text-align:right;"> S.E. </th> <th style="text-align:right;"> t val. </th> <th style="text-align:right;"> p </th> </tr> </thead> <tbody> <tr> <td style="text-align:left;font-weight: bold;"> (Intercept) </td> <td style="text-align:right;"> 180.39 </td> <td style="text-align:right;"> 11.08 </td> <td style="text-align:right;"> 16.28 </td> <td style="text-align:right;"> 0.00 </td> </tr> <tr> <td style="text-align:left;font-weight: bold;"> day </td> <td style="text-align:right;"> 0.01 </td> <td style="text-align:right;"> 0.05 </td> <td style="text-align:right;"> 0.27 </td> <td style="text-align:right;"> 0.79 </td> </tr> </tbody> <tfoot><tr><td style="padding: 0; " colspan="100%"> <sup></sup> Standard errors: OLS</td></tr></tfoot> </table> --- Were randomization issues fixed? --- <img src="images/angrist.JPG" width="85%" style="display: block; margin: auto;" /> --- ### Lottery Winners and Political Attitudes --- <img src="images/Doherty1.png" width="60%" style="display: block; margin: auto;" /> --- <img src="images/Doherty2.png" width="80%" style="display: block; margin: auto;" /> --- <img src="images/Doherty3.png" width="100%" style="display: block; margin: auto;" /> --- Do we think that randomized earnings have the same causal effect as nonrandomized earnings? --- If not, this may be a SUTVA violation; different individuals may be receiving causally different versions of the treatment. --- ### Classic Natural Experiments: Summary **The Big Idea**: Treatment is **"as‑if randomly assigned"** by nature, policy, or accident. **In Potential Outcomes Terms**: `$$(Y_i(1), Y_i(0)) \perp\!\!\!\perp D_i \mid X_i$$` - Treatment assignment \(D_i\) is independent of potential outcomes, **conditional on covariates** \(X_i\) (if needed). - This is exactly the **unconfoundedness (selection on observables)** assumption from Week 2. --- ### What This Means - If the natural experiment is credible, we don't need to control for many (or any) covariates – the "as‑if random" assignment does the work. - In practice, we often check balance on pre‑treatment covariates to support the claim. - The estimand is the **Average Treatment Effect (ATE)** – the same as in a randomized experiment. --- ### Key Conditions for a Valid Classic Natural Experiment 1. **Ignorability / Unconfoundedness**: Treatment is independent of potential outcomes (given covariates, if any). 2. **Overlap**: For every level of covariates, there are both treated and control units. 3. **SUTVA**: No interference, no hidden treatment variations (as in experiments). --- ### Takeaway When nature randomizes, we can analyze the data **as if it came from an experiment** – using difference in means, regression with few controls, or randomization inference. The burden of proof is on the researcher to convince us that the assignment really is **as‑if random**. --- ### IV Natural Experiment 1. "Nature" randomizes a cause of the treatment. - Call the treatment `\(X\)`. - Call the randomized cause of the treatment `\(Z\)`. 2. `\(Z\)` only affects `\(Y\)` through its effects on `\(X\)`. 3. Treatment caused by the randomized cause has the same effect as treatment with any other cause would have. --- ### IV Natural Experiment: Colonial Origins of Development - **Causal question**: Do institutions cause economic development? - **Problem**: Institutions are endogenous – countries with better institutions may differ in unobserved ways (culture, geography, human capital). - **Proposed solution**: Use **settler mortality** as an instrument for institutions. --- ### The Acemoglu, Johnson & Robinson (2001) IV Setup <!-- --> --- - **Instrument** (`\(Z\)`): Settler mortality rates among European colonists in the 17th–19th centuries. - **Treatment** (`\(D\)`): Quality of institutions today (e.g., protection against expropriation). - **Outcome** (`\(Y\)`): Log GDP per capita today. - **Confounder** (`\(U\)`): Unobserved factors like culture, geography, human capital. --- ### The Logic: Why Settler Mortality? AJR's argument: 1. **Where settlers faced high mortality**, they did not settle permanently. Instead, they set up **extractive institutions** (to extract resources) that persisted over time. 2. **Where settlers faced low mortality**, they settled in large numbers and established **institutions protecting property rights** – again, persisting to the present. 3. Settler mortality in the 1600s–1800s might be **exogenous** to modern economic development, except through its effect on institutions. --- ### How the IV Assumptions Are Met (or Argued) | Assumption | Meaning | In the AJR Setting | |------------|---------|---------------------| | **Relevance** | `\(Z\)` predicts `\(D\)` | High settler mortality → extractive institutions → weaker property rights today. First stage is strong. | --- ### How the IV Assumptions Are Met (or Argued) | Assumption | Meaning | In the AJR Setting | |------------|---------|---------------------| | **Exclusion** | `\(Z\)` affects `\(Y\)` only through `\(D\)` | Settler mortality centuries ago has no direct effect on modern GDP, except via the institutions it shaped. (Must defend: no effect through culture, disease environment, etc.) | --- ### How the IV Assumptions Are Met (or Argued) | Assumption | Meaning | In the AJR Setting | |------------|---------|---------------------| | **Independence** | `\(Z\)` is as‑good‑as randomly assigned | Conditional on covariates? Settler mortality is argued to be a historical accident, not correlated with other determinants of development. | --- ### How the IV Assumptions Are Met (or Argued) | Assumption | Meaning | In the AJR Setting | |------------|---------|---------------------| | **Monotonicity** | No defiers | Implicit: Higher mortality doesn't lead to better institutions in some places and worse in others – the effect is consistent in direction. | --- ### The Exclusion Restriction: The Key Challenge The exclusion restriction is the most debated assumption in AJR: - Could high settler mortality affect development **through other channels**? - **Disease environment**: High mortality areas may still have malaria, etc., affecting health and productivity today. - **Culture**: Different settlement patterns may have created different cultural legacies. - **Human capital**: Settlers brought skills; extractive colonies did not. --- AJR address these concerns by: - Controlling for contemporary malaria risk. - Using other instruments (e.g., population density in 1500). - Showing robustness across specifications. --- ### What Does the IV Estimate? Under the assumptions, the IV estimate identifies the **Local Average Treatment Effect (LATE)** for *compliers* – countries whose institutions were changed by settler mortality conditions. These are countries that: - Would have had good institutions if mortality was low. - Would have had bad institutions if mortality was high. - (Not countries that would have had the same institutions regardless.) --- This is exactly the set of countries where colonial settlement patterns mattered – arguably the relevant group for testing the institutional theory. --- <img src="images/settlers1.png" width="520" height="100%" style="display: block; margin: auto;" /> --- <img src="images/settlers2.png" width="968" height="100%" style="display: block; margin: auto;" /> --- <img src="images/settlers3.png" width="948" height="100%" style="display: block; margin: auto;" /> --- <img src="images/settlers4.png" width="80%" style="display: block; margin: auto;" /> --- ### Why This Is a Famous IV Natural Experiment - The instrument is **historical** – clearly pre-dating modern outcomes. - The logic is **theoretically grounded** in theories of colonialism and institutional persistence. - The assumptions are **explicitly stated and debated** – a model of transparent causal inference. - It spawned an entire literature on institutions and development, and remains a standard teaching example for IV. --- ### RDD 1. There is an assignment variable, `\(Z\)`. 2. Cases are assigned to treatment if and only if `\(Z\)` is greater than a predetermined threshold value, `\(T\)`. 3. There are enough cases that lots have scores of `\(Z\)` that are just above and just below `\(T\)`. --- ### Example: Maimonides' Rule > "The number of pupils assigned to each teacher is twenty-five. If > there are fifty, we appoint two teachers. If there are forty, we > appoint an assistant, at the expense of the town." (Baba Bathra, > Chapter II, page 21a; translated by Epstein 1976: 214) --- ### Example: Maimonides' Rule > "Twenty-five children may be put in charge of one teacher. If the > number in the class exceeds twenty-five but is not more than forty, he > should have an assistant to help with the instruction. If there are > more than forty, two teachers must be appointed." (Maimonides, given > in Hyamson 1937: 58b) --- ### Example: Maimonides' Rule - Maimonides' Rule is used to determine class sizes in Israel. - Angrist and Lavy (1999) use this to carry out an RDD analysis of the effects of class size on educational outcomes. --- ### Example: Maimonides' Rule <img src="images/maimonverb.jpeg" width="65%" style="display: block; margin: auto;" /> --- ### Example: Maimonides' Rule <img src="images/maimonmath.jpeg" width="65%" style="display: block; margin: auto;" /> --- ### Example: Maimonides' Rule If the RDD is a success, then the groups just above and below the cutpoint on `\(Z\)` should be balanced, both in terms of the number of cases and in terms of any measured background variables. 1. It may be a good idea to do a balance test between treatment and control cases within a bandwidth around the cutpoint. 2. At the very least, look at a histogram of cases to check that there are about the same number of cases just above and just below the threshold. --- - Can Maimonides' Rule be manipulated? What would manipulation look like here? --- <img src="images/Angrist2019title.PNG" width="85%" style="display: block; margin: auto;" /> --- <img src="images/Angrist2019-1.PNG" width="85%" style="display: block; margin: auto;" /> --- <img src="images/Angrist2019-2.PNG" width="85%" style="display: block; margin: auto;" /> --- ### Regression Discontinuity Design: Summary **The Big Idea**: Treatment is assigned by a cutoff on a continuous running variable. Comparing units just above and just below the cutoff mimics randomization. **In Potential Outcomes Terms**: `$$\lim_{x \downarrow c} E[Y(0) | X=x] = \lim_{x \uparrow c} E[Y(0) | X=x]$$` - This is the **continuity assumption**: Potential outcomes are continuous at the cutoff. - Under continuity, any jump in the observed outcome at \(c\) is the causal effect. --- ### What RDD Estimates - **Sharp RDD**: The average treatment effect for units at the cutoff: `$$\tau_{SRD} = E[Y(1) - Y(0) | X = c]$$` - **Fuzzy RDD**: The LATE for compliers at the cutoff: `$$\tau_{FRD} = \frac{\lim_{x\downarrow c} E[Y|X=x] - \lim_{x\uparrow c} E[Y|X=x]}{\lim_{x\downarrow c} E[D|X=x] - \lim_{x\uparrow c} E[D|X=x]}$$` --- ### Key Takeaways | What makes a good RDD? | What threatens validity? | |------------------------|--------------------------| | Clear, non-manipulable cutoff | Precise manipulation of running variable | | Many observations near cutoff | Discontinuities in covariates | | Graphical evidence of jump | Other treatments changing at cutoff | | Robustness to bandwidth choice | Discontinuity in density (McCrary test) | | Balance on pre-treatment covariates | Model dependence (global polynomials) | --- ### The RDD Credibility Checklist When reading or conducting an RDD study, ask: ☐ **Is there a clear cutoff?** Is treatment assignment deterministic (or fuzzy) at that point? ☐ **Is there evidence of manipulation?** Density test, heaping, sorting? ☐ **Are covariates balanced?** Do pre-treatment variables jump at the cutoff? --- ### The RDD Credibility Checklist ☐ **Is the graphical evidence convincing?** Plot with bins, fits, and confidence bands. ☐ **Are results robust?** Check bandwidth sensitivity, placebo cutoffs, polynomial order. ☐ **Is the interpretation precise?** RDD estimates the effect *at the cutoff* – does that generalize? --- ### Difference-in-Differences: A Natural Experiment Workhorse - **Research design**: Compare the change in outcomes for a treated group to the change in outcomes for an untreated comparison group. - **Why it's a natural experiment**: Treatment assignment is "as-if random" conditional on common trends – nature (or policy) determines who gets treated and when. --- **The canonical setup**: - Two periods: Pre-treatment (\(t=1\)) and post-treatment (\(t=2\)) - Two groups: Treated (\(D=1\)) and untreated (\(D=0\)) - Treatment occurs between periods for the treated group only. --- ### The DiD Estimand In potential outcomes notation: `$$ATT = E[Y_{i,2}(1) - Y_{i,2}(0) | D_i = 1]$$` But \(Y_{i,2}(0)\) is unobserved for treated units. DiD imputes it using: `$$\hat{Y}_{i,2}(0) = Y_{i,1} + \underbrace{(E[Y_{j,2}|D=0] - E[Y_{j,1}|D=0])}_{\text{common trend}}$$` --- **The DiD estimator**: `$$\hat{\tau}_{DiD} = (\bar{Y}_{T,2} - \bar{Y}_{T,1}) - (\bar{Y}_{C,2} - \bar{Y}_{C,1})$$` --- ### The Parallel Trends Assumption **Core identifying assumption**: In the absence of treatment, the treated and control groups would have followed the same trend. Formally: `$$\small E[Y_{i,2}(0) - Y_{i,1}(0) | D_i = 1] = E[Y_{i,2}(0) - Y_{i,1}(0) | D_i = 0]$$` --- **What this means**: - Levels can differ (that's why we use changes) - The path of outcomes would have been parallel - Any violation (e.g., differential economic shocks) biases the estimate --- ### Visualizing Parallel Trends: A Simulation <!-- --> --- - The dashed line shows the **counterfactual** trend for treated units (parallel to control) - The gap between observed and counterfactual in period 2 is the **treatment effect** --- ### The Canonical DiD Example: Card and Krueger (1994) - **Question**: Does raising the minimum wage reduce employment? - **Setting**: New Jersey raised minimum wage; Pennsylvania did not. - **Data**: Fast food restaurants in both states, before and after the policy. --- ### The Canonical DiD Example: Card and Krueger (1994) ``` r library(dplyr) library(tidyr) library(knitr) library(kableExtra) data_path <- file.path("data", "CK1994.csv") ck <- read.csv(data_path, na.strings = c(".", "")) # missing values are coded as "." ck <- ck %>% mutate( total_emp = empft + emppt + nmgrs, # Label groups state_name = ifelse(state == 1, "New Jersey (treated)", "Pennsylvania (control)"), period = ifelse(time == 0, "Before", "After") ) ``` --- ``` r # Keep only complete cases for the three employment components ck_clean <- ck %>% filter(!is.na(empft) & !is.na(emppt) & !is.na(nmgrs)) # Calculate mean employment by state and period means <- ck_clean %>% group_by(state_name, period) %>% summarise(mean_emp = mean(total_emp, na.rm = TRUE), .groups = "drop") # Pivot to wide format for easy differencing means_wide <- means %>% pivot_wider(names_from = period, values_from = mean_emp) ``` --- ``` r # Compute differences (After - Before) means_wide <- means_wide %>% mutate(Difference = After - Before) # Extract DiD estimate did_estimate <- means_wide$Difference[means_wide$state_name == "New Jersey (treated)"] - means_wide$Difference[means_wide$state_name == "Pennsylvania (control)"] # Create a clean presentation table table_did <- means_wide %>% select(state_name, Before, After, Difference) %>% rename(State = state_name) # Add a row for the DiD estimate table_did <- bind_rows( table_did, tibble( State = "Difference-in-Differences", Before = NA, After = NA, Difference = did_estimate ) ) ``` --- ``` r # Format numbers (round to one decimal) table_did <- table_did %>% mutate( Before = round(Before, 1), After = round(After, 1), Difference = round(Difference, 1) ) ``` --- ``` r # Display the table (for RMarkdown slide) kable(table_did, caption = "Effect of Minimum Wage Increase on Employment (Card & Krueger 1994)", align = "lccc") %>% kable_styling(full_width = FALSE) # optional, requires kableExtra package ``` <table class="table" style="width: auto !important; margin-left: auto; margin-right: auto;"> <caption>Effect of Minimum Wage Increase on Employment (Card & Krueger 1994)</caption> <thead> <tr> <th style="text-align:left;"> State </th> <th style="text-align:center;"> Before </th> <th style="text-align:center;"> After </th> <th style="text-align:center;"> Difference </th> </tr> </thead> <tbody> <tr> <td style="text-align:left;"> New Jersey (treated) </td> <td style="text-align:center;"> 29.9 </td> <td style="text-align:center;"> 30.2 </td> <td style="text-align:center;"> 0.3 </td> </tr> <tr> <td style="text-align:left;"> Pennsylvania (control) </td> <td style="text-align:center;"> 33.2 </td> <td style="text-align:center;"> 31.2 </td> <td style="text-align:center;"> -2.0 </td> </tr> <tr> <td style="text-align:left;"> Difference-in-Differences </td> <td style="text-align:center;"> NA </td> <td style="text-align:center;"> NA </td> <td style="text-align:center;"> 2.3 </td> </tr> </tbody> </table> --- ### The Canonical DiD Example: Card and Krueger (1994) - Result: Employment **increased** in NJ relative to PA – contrary to traditional economic theory. - Sparked decades of debate about the parallel trends assumption. --- ### Threats to Parallel Trends 1. **Differential time shocks**: PA had a recession; NJ boomed. 2. **Composition changes**: Different types of restaurants open/close. 3. **Ashenfelter's dip**: Units select into treatment after a temporary decline. 4. **Anticipation effects**: Units change behavior before treatment. --- **Solutions**: - Add unit-specific time trends - Use multiple pre-treatment periods to test for parallel pre-trends - Include covariates (conditional parallel trends) --- ### Synthetic Control: When No Single Unit Is a Good Comparison - **Problem**: In comparative case studies (e.g., California's Proposition 99), no single state provides a perfect counterfactual. - **Solution**: Construct a **synthetic control** – a weighted average of donor units that matches the treated unit on pre-treatment outcomes and covariates. **The big idea**: Let the data choose the optimal comparison group. --- ### The Synthetic Control Estimand For a treated unit \(j=1\) and donor units \(j=2,...,J+1\): `$$\hat{Y}_{1t}(0) = \sum_{j=2}^{J+1} w_j Y_{jt}$$` where weights \(w_j \geq 0\) and \(\sum w_j = 1\). --- We choose weights to minimize: `$$\sum_{m=1}^{M} v_m (X_{1m} - \sum_{j=2}^{J+1} w_j X_{jm})^2$$` - \(X\) includes pre-treatment outcomes and covariates - \(v_m\) are predictor importance weights (chosen to minimize pre-treatment MSPE) --- ### The Canonical Example: California Proposition 99 (Abadie et al. 2010) - **Intervention**: California passed tobacco tax Proposition 99 in 1988. - **Question**: Did it reduce cigarette consumption? - **Donor pool**: 38 states without similar legislation. - **Predictors**: Pre-treatment cigarette sales, income, beer consumption, age composition. --- ``` r library(tidysynth) smoking_out <- smoking %>% synthetic_control(outcome = cigsale, unit = state, time = year, i_unit = "California", i_time = 1988, generate_placebos = TRUE) %>% generate_predictor(time_window = 1980:1988, ln_income = mean(lnincome, na.rm = TRUE), ret_price = mean(retprice, na.rm = TRUE), youth = mean(age15to24, na.rm = TRUE)) %>% generate_predictor(time_window = 1984:1988, beer_sales = mean(beer, na.rm = TRUE)) %>% generate_predictor(time_window = 1975, cigsale_1975 = cigsale) %>% generate_predictor(time_window = 1980, cigsale_1980 = cigsale) %>% generate_predictor(time_window = 1988, cigsale_1988 = cigsale) %>% generate_weights(optimization_window = 1970:1988) %>% generate_control() ``` --- ### Visualizing the Synthetic Control ``` r # Plot the results smoking_out %>% plot_trends() ``` <!-- --> --- ### Visualizing the Synthetic Control ``` r # Examine weights smoking_out %>% plot_weights() ``` <!-- --> --- ### Visualizing the Synthetic Control ``` r # Check balance smoking_out %>% grab_balance_table() ``` ``` ## # A tibble: 7 × 4 ## variable California synthetic_California donor_sample ## <chr> <dbl> <dbl> <dbl> ## 1 ln_income 10.1 9.86 9.83 ## 2 ret_price 89.4 89.3 87.3 ## 3 youth 0.174 0.174 0.173 ## 4 beer_sales 24.3 24.1 23.7 ## 5 cigsale_1975 127. 127. 137. ## 6 cigsale_1980 120. 120. 138. ## 7 cigsale_1988 90.1 91.4 114. ``` --- ### Visualizing the Synthetic Control - **Pre-treatment fit**: Synthetic and observed California should track closely. - **Post-treatment divergence**: The gap is the estimated treatment effect. - **Weights**: Which states contribute to the synthetic control? (Often just a few.) --- ### The Synthetic Control Advantage **Why synthetic control often beats DiD**: 1. **Transparency**: We see exactly which units form the counterfactual. 2. **No extrapolation**: Weights are non-negative and sum to one. 3. **Data-driven**: No researcher discretion in selecting comparison units. 4. **Visual placebo tests**: We can apply the same method to every donor unit. --- ### Synthetic Control: Key Takeaways | What makes a good SC? | What threatens validity? | |------------------------|--------------------------| | Long pre-treatment period | Interpolation bias (donors too different) | | Good pre-treatment fit | Structural breaks in donors | | Diverse donor pool | Treatment diffusion to donors | | Plausible exclusion of treated unit's influencers | Anticipation effects | --- **In potential outcomes terms**: SC imputes the counterfactual using a weighted average of untreated units, chosen to match pre-treatment characteristics and outcomes. --- ### Natural Experiments: Key Takeaways | Design | Identification Strategy | Canonical Example | |--------|------------------------|--------------------| | **Classic NE** | As-if random treatment | Snow (cholera), Draft lottery | | **IV-type NE** | As-if random instrument | AJR (settler mortality) | | **RDD** | Discontinuity at cutoff | Maimonides' Rule | | **DiD** | Parallel trends | Card & Krueger (min wage) | | **Synthetic Control** | Weighted donor pool | CA Prop 99 (tobacco tax) | --- ### Natural Experiments: Key Takeaways **Common thread**: Each design uses **design** rather than statistical adjustment to approximate randomization. The credibility of any natural experiment rests on the plausibility of its identifying assumptions. --- **Next week**: We dive deep into **Instrumental Variables** – the workhorse for IV-type natural experiments and many other settings.