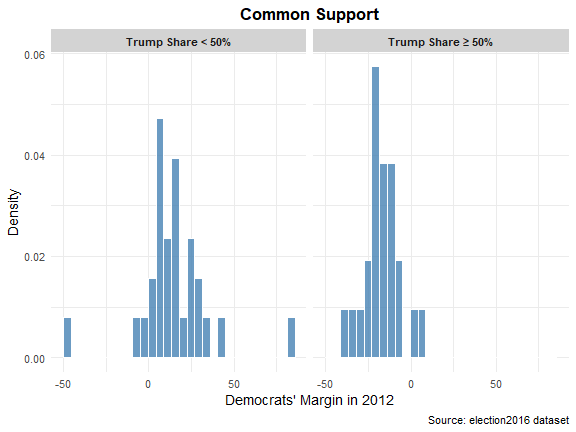

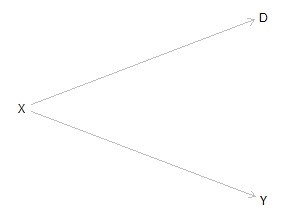

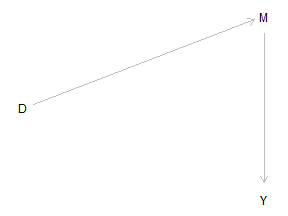

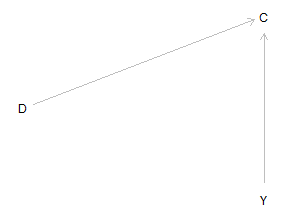

class: center, middle, inverse, title-slide .title[ # 2: Regression for Causal Inference ] .subtitle[ ## Quantitative Causal Inference ] .author[ ### <large>Jaye Seawright</large> ] .institute[ ### <small>Northwestern Political Science</small> ] .date[ ### April 9 and 14, 2026 ] --- class: center, middle ### Why Regression? From Experiments to Observational Studies - In an experiment, random assignment ensures that treatment is independent of potential outcomes. - In observational data, we worry that treated and control units differ on other characteristics (confounders). - Regression attempts to **adjust for those differences** by holding confounders constant. --- ### The Intuition: Adjusting for a Confounder ``` r lm_biased <- lm(Stateterrorism ~ TrumpShare, data = election2016) coef(lm_biased)["TrumpShare"] ``` ``` ## TrumpShare ## -0.2110919 ``` ``` r # Adjust for past election and Turnout lm_adjusted <- lm(Stateterrorism ~ TrumpShare + Turnout16 + Turnout12 + D12Margin, data = election2016) coef(lm_adjusted)["TrumpShare"] ``` ``` ## TrumpShare ## 0.02730885 ``` --- - The coefficient changes because we are now comparing treated and control units having subtracted off the estimated effects of turnout and electoral history. - This is the core idea: **control for confounders to mimic randomization**. --- ### When Does This Work? The Key Assumption **Unconfoundedness (selection on observables)** `$$D_i \perp\!\!\!\perp (Y_i(0), Y_i(1)) \mid X_i$$` --- - Conditional on observed covariates \(X\), treatment is as good as randomly assigned. - This means: no unmeasured confounders that affect both treatment and outcome. --- How reasonable is this assumption in observational studies? For example, does the Trump and terrorism regression plausibly meet this standard? (What would need to be true?) --- **Overlap / Common Support** For every combination of values of \(X\), there must be both treated and control units. --- Let's use a dichotomous version of TrumpSupport, splitting states into majority/minority Trump states, and consider the distributions of D12Margin in each category. --- ``` r # Side-by-side histograms using facet_wrap election2016 <- election2016 %>% mutate(TrumpGroup = ifelse(TrumpShare >= 50, "Trump Share ≥ 50%", "Trump Share < 50%")) p <- ggplot(election2016, aes(x = D12Margin)) + geom_histogram( aes(y = after_stat(density)), # use density for easy comparison bins = 30, # adjust bin width as needed fill = "steelblue", # attractive fill color color = "white", # white border for bins alpha = 0.8 # slight transparency ) + facet_wrap(~ TrumpGroup, ncol = 2) + # two panels side by side labs( title = "Common Support", x = "Democrats' Margin in 2012", y = "Density", caption = "Source: election2016 dataset" ) + theme_minimal(base_size = 14) + # clean minimal theme theme( strip.background = element_rect(fill = "lightgray", color = NA), strip.text = element_text(face = "bold"), plot.title = element_text(hjust = 0.5, face = "bold") ) ``` --- <!-- --> --- - Clearly, states with strong Democratic margins in 2012 are only in the control group and states with very negative Democratic margins in 2012 are basically only in the treatment group. We don't have real common support here! - We should focus mostly on swing states where counterfactuals actually make sense. --- ### Visualizing Confounding with DAGs <!-- --> --- - \(X\) is a confounder: it affects both treatment \(D\) and outcome \(Y\). - Controlling for \(X\) blocks the backdoor path `\(D \leftarrow X \rightarrow Y\)`. - This gives us the causal effect of `\(D\)` on `\(Y\)`. --- ### What Does "Controlling For" Mean? - Intuitively: we are asking what the relationship between treatment and outcome would be if past elections were held constant. - Mathematically: regression uses the variation in treatment that is **not explained by past elections** to estimate the effect. **Key idea**: Including a confounder removes its influence from both treatment and outcome, reducing bias. --- ### When Is a Variable a Confounder? A confounder is a variable that: 1. Affects the treatment, 2. Affects the outcome, 3. Is not itself affected by the treatment. --- ### Good Controls: Block Backdoor Paths Any variable that lies on a backdoor path from `\(D\)` to `\(Y\)` and is not itself a descendant of `\(D\)` is a **good control**. Example from the terrorism study: `past turnout` and `voting history` are plausible confounders – they might affect both vote choice in 2016 and subsequent terrorism. --- ### Bad Controls: Mediators <!-- --> --- - `\(M\)` is a **mediator**: it lies on the causal path from `\(D\)` to `\(Y\)`. - Controlling for `\(M\)` would **block part of the causal effect** we want to estimate. - This is a form of **overcontrol bias**. In the Peru study, if `enojado` (anger) is a mediator, we should **not** control for it when estimating the total effect of `simpletreat` on voting. --- ### Bad Controls: Colliders <!-- --> --- - `\(C\)` is a **collider**: it is caused by both `\(D\)` and `\(Y\)`. - Conditioning on a collider **opens a non‑causal path** `\(D \rightarrow C \leftarrow Y\)`, creating **selection bias**. --- - Example: if we control for a variable that is itself affected by treatment and outcome (e.g., turnout in 2020 in the terrorism example), we can introduce bias. --- ### Bad Controls: Post‑Treatment Variables Any variable that is caused by the treatment is **post‑treatment**. Controlling for such a variable can: - Block part of the causal effect (if it is a mediator), - Create collider bias (if it is also affected by other factors), - Generally lead to incorrect estimates. --- **Rule of thumb**: Only control for variables measured **before** treatment. --- ### Translating DAGs to Regression Practice When building a regression model for causal inference: 1. **List all potential confounders** – variables that affect both treatment and outcome. 2. **Draw a DAG** (even informally) to check for backdoor paths. 3. **Do not control for mediators or colliders**. 4. **Do not control for post‑treatment variables**. 5. **Include interactions if you suspect heterogeneous effects** (more on this later). --- ### The Problem of Unobserved Confounders We can only control for variables we have measured. If an important confounder is unmeasured, our estimate may still be biased. This is why **sensitivity analysis** is crucial – we need to ask: how strong would an unmeasured confounder have to be to overturn our conclusion? (We will return to this in later weeks.) --- ### Summary: Regression for Causal Inference - Regression can reduce bias by adjusting for confounders. - Use DAGs to decide which variables to control for. - Avoid bad controls: mediators, colliders, post‑treatment variables. - Unobserved confounders remain a threat – be humble. - Heterogeneous effects mean the regression coefficient is a weighted average; explore variation. --- Let's see how this works in practice in an excellent application, the study of long-term effects of victimization that is one of the optional readings for this week. --- <img src="images/luputitleauthor.JPG" width="90%" /> --- <img src="images/lupu1.JPG" width="90%" /> --- <img src="images/lupu2.JPG" width="90%" /> --- <img src="images/lupu3.JPG" width="90%" /> --- <img src="images/lupu4.JPG" width="70%" /> --- ``` r library(dagitty) ``` --- Let's assemble a DAG that captures what appears to be the theoretical intuition behind Lupu and Peisahkin's analysis. --- ``` r LupuPeisahkinDAG1 <- dagitty( "dag {Dekulakized -> Victimization PreSovietWealth -> Victimization SovietOpposition -> Victimization PreSovietReligiosity -> Victimization PriorRegion -> Victimization DeportationRegion -> Victimization DeportationRegion -> Religiosity PriorRegion -> Religiosity PreSovietReligiosity -> Religiosity SovietOpposition -> Religiosity PreSovietWealth -> Religiosity Victimization -> Religiosity Dekulakized -> Religiosity}" ) ``` --- ``` r plot( LupuPeisahkinDAG1 ) ``` <img src="2regression_files/figure-html/unnamed-chunk-8-1.png" width="50%" /> --- * In this initial setup, there are a lot of variables that are confounders for the main relationship of interest, between Victimization and Religiosity. Nothing is post-treatment, etc. * Is this the only plausible causal framework? What about... --- ``` r LupuPeisahkinDAG2 <- dagitty( "dag {Dekulakized <- Victimization PreSovietWealth -> Victimization SovietOpposition -> Victimization PreSovietReligiosity -> Victimization PriorRegion -> Victimization DeportationRegion <- Victimization DeportationRegion -> Religiosity PriorRegion -> Religiosity PreSovietReligiosity -> Religiosity SovietOpposition -> Religiosity PreSovietWealth -> Religiosity Victimization -> Religiosity Dekulakized -> Religiosity}" ) ``` --- ``` r plot( LupuPeisahkinDAG2 ) ``` <img src="2regression_files/figure-html/unnamed-chunk-10-1.png" width="50%" /> --- * Here, Victimization causes DeportationRegion and Dekulakized. How does this change the appropriate set of control variables? --- ``` r adjustmentSets(LupuPeisahkinDAG1, exposure = "Victimization", outcome = "Religiosity") ``` ``` ## { Dekulakized, DeportationRegion, PreSovietReligiosity, ## PreSovietWealth, PriorRegion, SovietOpposition } ``` ``` r adjustmentSets(LupuPeisahkinDAG2, exposure = "Victimization", outcome = "Religiosity") ``` ``` ## { PreSovietReligiosity, PreSovietWealth, PriorRegion, SovietOpposition ## } ``` --- ``` r impliedConditionalIndependencies(LupuPeisahkinDAG1) ``` ``` ## Dklk _||_ DprR ## Dklk _||_ PrSR ## Dklk _||_ PrSW ## Dklk _||_ PrrR ## Dklk _||_ SvtO ## DprR _||_ PrSR ## DprR _||_ PrSW ## DprR _||_ PrrR ## DprR _||_ SvtO ## PrSR _||_ PrSW ## PrSR _||_ PrrR ## PrSR _||_ SvtO ## PrSW _||_ PrrR ## PrSW _||_ SvtO ## PrrR _||_ SvtO ``` --- Are presidential regimes especially vulnerable to democratic backsliding? Let's try to get a regression-based answer as an application of what we've learned. --- <img src="2regression_files/figure-html/unnamed-chunk-13-1.png" width="50%" /> --- ``` r library(tidyverse) ``` --- ``` r qog_std_ts_jan22 <- read_csv("https://github.com/jnseawright/PS406/raw/main/data/qog_std_ts_jan22.csv") qogdems2000 <- qog_std_ts_jan22 %>% filter(year==2000 & vdem_libdem > 0.5) qog2000demsin2020 <- qog_std_ts_jan22 %>% filter(year==2020 & cname %in% qogdems2000$cname) preslm <- lm(vdem_libdem ~ br_pres, data=qog2000demsin2020) ``` --- ``` r summ(preslm) ``` <table class="table table-striped table-hover table-condensed table-responsive" style="width: auto !important; margin-left: auto; margin-right: auto;"> <tbody> <tr> <td style="text-align:left;font-weight: bold;"> Observations </td> <td style="text-align:right;"> 61 (1 missing obs. deleted) </td> </tr> <tr> <td style="text-align:left;font-weight: bold;"> Dependent variable </td> <td style="text-align:right;"> vdem_libdem </td> </tr> <tr> <td style="text-align:left;font-weight: bold;"> Type </td> <td style="text-align:right;"> OLS linear regression </td> </tr> </tbody> </table> <table class="table table-striped table-hover table-condensed table-responsive" style="width: auto !important; margin-left: auto; margin-right: auto;"> <tbody> <tr> <td style="text-align:left;font-weight: bold;"> F(1,59) </td> <td style="text-align:right;"> 2.05 </td> </tr> <tr> <td style="text-align:left;font-weight: bold;"> R² </td> <td style="text-align:right;"> 0.03 </td> </tr> <tr> <td style="text-align:left;font-weight: bold;"> Adj. R² </td> <td style="text-align:right;"> 0.02 </td> </tr> </tbody> </table> <table class="table table-striped table-hover table-condensed table-responsive" style="width: auto !important; margin-left: auto; margin-right: auto;border-bottom: 0;"> <thead> <tr> <th style="text-align:left;"> </th> <th style="text-align:right;"> Est. </th> <th style="text-align:right;"> S.E. </th> <th style="text-align:right;"> t val. </th> <th style="text-align:right;"> p </th> </tr> </thead> <tbody> <tr> <td style="text-align:left;font-weight: bold;"> (Intercept) </td> <td style="text-align:right;"> 0.71 </td> <td style="text-align:right;"> 0.03 </td> <td style="text-align:right;"> 27.19 </td> <td style="text-align:right;"> 0.00 </td> </tr> <tr> <td style="text-align:left;font-weight: bold;"> br_pres </td> <td style="text-align:right;"> -0.06 </td> <td style="text-align:right;"> 0.04 </td> <td style="text-align:right;"> -1.43 </td> <td style="text-align:right;"> 0.16 </td> </tr> </tbody> <tfoot><tr><td style="padding: 0; " colspan="100%"> <sup></sup> Standard errors: OLS</td></tr></tfoot> </table> --- ``` r preslm2 <- lm(vdem_libdem ~ br_pres + as.factor(ht_colonial) + ccp_systyear + br_pvote, data=qog2000demsin2020) ``` --- ``` r summ(preslm2) ``` <table class="table table-striped table-hover table-condensed table-responsive" style="width: auto !important; margin-left: auto; margin-right: auto;"> <tbody> <tr> <td style="text-align:left;font-weight: bold;"> Observations </td> <td style="text-align:right;"> 58 (4 missing obs. deleted) </td> </tr> <tr> <td style="text-align:left;font-weight: bold;"> Dependent variable </td> <td style="text-align:right;"> vdem_libdem </td> </tr> <tr> <td style="text-align:left;font-weight: bold;"> Type </td> <td style="text-align:right;"> OLS linear regression </td> </tr> </tbody> </table> <table class="table table-striped table-hover table-condensed table-responsive" style="width: auto !important; margin-left: auto; margin-right: auto;"> <tbody> <tr> <td style="text-align:left;font-weight: bold;"> F(9,48) </td> <td style="text-align:right;"> 3.30 </td> </tr> <tr> <td style="text-align:left;font-weight: bold;"> R² </td> <td style="text-align:right;"> 0.38 </td> </tr> <tr> <td style="text-align:left;font-weight: bold;"> Adj. R² </td> <td style="text-align:right;"> 0.27 </td> </tr> </tbody> </table> <table class="table table-striped table-hover table-condensed table-responsive" style="width: auto !important; margin-left: auto; margin-right: auto;border-bottom: 0;"> <thead> <tr> <th style="text-align:left;"> </th> <th style="text-align:right;"> Est. </th> <th style="text-align:right;"> S.E. </th> <th style="text-align:right;"> t val. </th> <th style="text-align:right;"> p </th> </tr> </thead> <tbody> <tr> <td style="text-align:left;font-weight: bold;"> (Intercept) </td> <td style="text-align:right;"> 2.50 </td> <td style="text-align:right;"> 0.76 </td> <td style="text-align:right;"> 3.29 </td> <td style="text-align:right;"> 0.00 </td> </tr> <tr> <td style="text-align:left;font-weight: bold;"> br_pres </td> <td style="text-align:right;"> 0.03 </td> <td style="text-align:right;"> 0.04 </td> <td style="text-align:right;"> 0.76 </td> <td style="text-align:right;"> 0.45 </td> </tr> <tr> <td style="text-align:left;font-weight: bold;"> as.factor(ht_colonial)1 </td> <td style="text-align:right;"> -0.31 </td> <td style="text-align:right;"> 0.14 </td> <td style="text-align:right;"> -2.26 </td> <td style="text-align:right;"> 0.03 </td> </tr> <tr> <td style="text-align:left;font-weight: bold;"> as.factor(ht_colonial)2 </td> <td style="text-align:right;"> -0.09 </td> <td style="text-align:right;"> 0.07 </td> <td style="text-align:right;"> -1.42 </td> <td style="text-align:right;"> 0.16 </td> </tr> <tr> <td style="text-align:left;font-weight: bold;"> as.factor(ht_colonial)5 </td> <td style="text-align:right;"> -0.12 </td> <td style="text-align:right;"> 0.05 </td> <td style="text-align:right;"> -2.28 </td> <td style="text-align:right;"> 0.03 </td> </tr> <tr> <td style="text-align:left;font-weight: bold;"> as.factor(ht_colonial)6 </td> <td style="text-align:right;"> -0.24 </td> <td style="text-align:right;"> 0.11 </td> <td style="text-align:right;"> -2.26 </td> <td style="text-align:right;"> 0.03 </td> </tr> <tr> <td style="text-align:left;font-weight: bold;"> as.factor(ht_colonial)7 </td> <td style="text-align:right;"> -0.13 </td> <td style="text-align:right;"> 0.08 </td> <td style="text-align:right;"> -1.59 </td> <td style="text-align:right;"> 0.12 </td> </tr> <tr> <td style="text-align:left;font-weight: bold;"> as.factor(ht_colonial)9 </td> <td style="text-align:right;"> -0.07 </td> <td style="text-align:right;"> 0.13 </td> <td style="text-align:right;"> -0.49 </td> <td style="text-align:right;"> 0.63 </td> </tr> <tr> <td style="text-align:left;font-weight: bold;"> ccp_systyear </td> <td style="text-align:right;"> -0.00 </td> <td style="text-align:right;"> 0.00 </td> <td style="text-align:right;"> -2.36 </td> <td style="text-align:right;"> 0.02 </td> </tr> <tr> <td style="text-align:left;font-weight: bold;"> br_pvote </td> <td style="text-align:right;"> 0.04 </td> <td style="text-align:right;"> 0.05 </td> <td style="text-align:right;"> 0.95 </td> <td style="text-align:right;"> 0.35 </td> </tr> </tbody> <tfoot><tr><td style="padding: 0; " colspan="100%"> <sup></sup> Standard errors: OLS</td></tr></tfoot> </table> --- ### Is it better to include all but one control variable, or have fewer? It turns out, maybe surprisingly, that there isn't just one answer to this question. * Sometimes, you will get an estimate with less bias by leaving out valid control variables from an incomplete model. * Sometimes you will do better by including as many control variables as you can even if you don't know them all. * We don't know which scenario is which in general. --- ### How Much Does It Hurt to Be Wrong? Suppose we know we can get a good causal inference from a regression with two control variables and our treatment, but we don't have access to any way of measuring one of those control variables. We have two options: 1. Estimate a regression including the treatment and one control variable. 2. Estimate a bivariate regression including only the treatment. --- Both of these options will have omitted variable bias. 1. The first option will have the bias due to leaving out the unmeasurable variable. 2. The second option will have the bias due to leaving out the unmeasurable variable, and the bias due to leaving out the measured control variable. --- These two sources of bias can point in the same direction, in which scenario the second option will usually be worse than the first. Or the two sources of bias can point in opposite directions, in which scenario the second option can often be better than the first. --- <img src="images/Clarke1.png" width="90%" /> --- <img src="images/Clarke2.png" width="90%" /> --- ## When Does Adding a Control Variable Help? - Adding a control variable reduces overall bias when the still-omitted variables and the newly added variable have effects on the outcome that point in the same direction and are positively correlated with each other. - Adding it increases bias when those two quantities have opposite signs, or when they have the same sign but are negatively correlated. The new control pulls the estimate the wrong way relative to what's still missing. --- - Since we rarely know the signs and correlations of unmeasured confounders, this is largely a judgment call. As Clarke emphasizes, the two scenarios are roughly equally common in principle. --- ### Regression for Causal Inference - If the causal effect is not constant across all cases, regression will not give a consistent estimate of the average treatment effect. - Instead, it estimates a covariance-adjusted weighted average of cases' treatment effects. --- Part of our goal in this seminar is to explore advanced techniques. You don't always have to implement each of these, but learning about them can give you good ideas and broaden your skill set! --- ### Aronow and Samii 2016 <img src="images/aronowsamii1.JPG" width="90%" /> --- ### Aronow and Samii 2016 <img src="images/aronowsamii2.JPG" width="90%" /> --- ### Aronow and Samii 2016 <img src="images/aronowsamii3.JPG" width="90%" /> --- ### Chattopadhyay and Zubizarreta 2023 <img src="images/Chattopadhyay1.png" width="90%" /> --- ### Chattopadhyay and Zubizarreta 2023 `$$\hat{\tau}_{OLS} = \sum_{i:D_{i}=1} w_{i} Y_{i} - \sum_{i:D_{i}=0} w_{i} Y_{i}$$` --- ### Chattopadhyay and Zubizarreta 2023 There is a different set of weights called the ''multi-regression imputation'' or MRI: `$$\hat{\tau}_{MRI} = \sum_{i:D_{i}=1} w^{MRI}_{i}(\bar{X}) Y_{i} - \sum_{i:D_{i}=0} w^{MRI}_{i}(\bar{X}) Y_{i}$$` `$$w^{MRI}_{i}(x) = n^{−1}_{T} + (X_{i} − \bar{X}_{T})^{T}S^{−1}_{T}(x − \bar{X}_{T})$$` --- ### Chattopadhyay and Zubizarreta 2023 If there is causal heterogeneity, the OLS weights are a biased estimator of ATE, but the MRI weights can be an unbiased estimator. --- ``` r library(lmw) qog2000demsin2020clean <- qog2000demsin2020 %>% filter(!is.na(br_pres) & !is.na(ccp_systyear) & !is.na(ht_colonial) & !is.na(br_pvote)) ``` --- ``` r preslmw.uri <- lmw(~ br_pres + as.factor(ht_colonial) + br_pvote, data = qog2000demsin2020clean, estimand = "ATT", method = "URI", treat = "br_pres") preslmw.urifit <- lmw_est(preslmw.uri, outcome = "vdem_libdem") summary(preslmw.urifit) ``` ``` ## ## Effect estimates: ## Estimate Std. Error 95% CI L 95% CI U t value Pr(>|t|) ## E[Y₁-Y₀|A=1] 0.02045 0.03772 -0.05535 0.09625 0.542 0.59 ## ## Residual standard error: 0.1371 on 49 degrees of freedom ``` --- ``` r preslmw.mri <- lmw(~ br_pres + as.factor(ht_colonial) + br_pvote, data = qog2000demsin2020clean, estimand = "ATT", method = "MRI", treat = "br_pres") preslmw.mrifit <- lmw_est(preslmw.mri, outcome = "vdem_libdem") summary(preslmw.mrifit) ``` ``` ## ## Effect estimates: ## Estimate Std. Error 95% CI L 95% CI U t value Pr(>|t|) ## E[Y₁-Y₀|A=1] 0.02144 0.03880 -0.05667 0.09955 0.552 0.583 ## ## Residual standard error: 0.1396 on 46 degrees of freedom ## ## Potential outcome means: ## Estimate Std. Error 95% CI L 95% CI U t value Pr(>|t|) ## E[Y₀|A=1] 0.62856 0.03964 0.54877 0.70835 15.86 <2e-16 *** ## E[Y₁|A=1] 0.65000 0.03184 0.58590 0.71410 20.41 <2e-16 *** ## --- ## Signif. codes: 0 '***' 0.001 '**' 0.01 '*' 0.05 '.' 0.1 ' ' 1 ``` --- ``` r plot(preslmw.mri) ``` <img src="2regression_files/figure-html/unnamed-chunk-28-1.png" width="60%" />